What Changed and Why It Matters

AI teams are moving training and heavy inference to GPU clouds that run on cleaner, more flexible power. The driver isn’t PR. It’s unit economics and reliability.

GPU costs are surging. Power is now a hard constraint. Grids are stressed. The fastest path to capacity is renting GPUs where energy is abundant, cheap, and increasingly renewable. Investors are also rotating from app bets to infrastructure plays, signaling where they expect value to accrue.

“Renewable energy, which is intermittent and variable, is easier to add to a grid if that grid has lots of shock absorbers that can shift with demand.”

This is the strategic unlock: many AI workloads, training above all, are schedulable. Training can flex to follow wind and solar output, or run where hydro and nuclear supply is plentiful. Batteries and smart orchestration turn data centers into grid assets, not liabilities. Zoom out and the pattern becomes obvious: compute is following power.

The Actual Move

Here’s what ecosystem players are doing, in practice:

- Renting GPU-as-a-Service instead of buying racks. Short-term bursts for training; elastic scaling for demand peaks.

- Pinning jobs to regions with cleaner grids (hydro, wind, nuclear) and cheaper energy tariffs.

- Time-shifting non-urgent training to hours with high renewable output or lower prices.

- Pairing data centers with batteries to smooth power draw and prevent training stalls.

- Using secure GPU clouds to meet compliance requirements while avoiding capex and supply chain risk.

- Pushing latency-sensitive inference to edge GPUs for real-time performance, keeping heavy training in flexible, renewable-rich zones.
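Region pinning and time-shifting can be combined into one placement decision. The sketch below illustrates this under stated assumptions. It assumes you already have hourly price and carbon-intensity forecasts per region. It folds carbon into cost with a hypothetical internal carbon fee, then slides a window the length of the job across each forecast and keeps the cheapest one. `Hour`, `best_placement`, the region names, the forecast numbers, and the $100/t fee are all illustrative, not any provider's API.

```python
from __future__ import annotations

from typing import NamedTuple


class Hour(NamedTuple):
    price_usd_per_mwh: float  # forecast energy tariff for this hour (hypothetical input)
    carbon_g_per_kwh: float   # forecast grid carbon intensity for this hour (hypothetical input)


def hourly_score(h: Hour, carbon_fee_usd_per_t: float) -> float:
    # g/kWh equals kg/MWh, so dividing by 1000 gives tonnes per MWh
    return h.price_usd_per_mwh + carbon_fee_usd_per_t * h.carbon_g_per_kwh / 1000


def best_placement(
    forecasts: dict[str, list[Hour]],
    job_hours: int,
    carbon_fee_usd_per_t: float = 100.0,  # assumed internal carbon price
) -> tuple[str, int, float]:
    """Return (region, start_hour, mean_score) of the lowest-scoring contiguous window."""
    best: tuple[str, int, float] | None = None
    for region, hours in forecasts.items():
        scores = [hourly_score(h, carbon_fee_usd_per_t) for h in hours]
        # Slide a job_hours-long window across this region's forecast horizon.
        for start in range(len(scores) - job_hours + 1):
            mean = sum(scores[start:start + job_hours]) / job_hours
            if best is None or mean < best[2]:
                best = (region, start, mean)
    if best is None:
        raise ValueError("no regional forecast covers the job length")
    return best


# Hypothetical forecasts: steady hydro versus a solar region with a midday trough.
forecasts = {
    "hydro-north": [Hour(40, 30)] * 6,
    "solar-south": [Hour(90, 450), Hour(90, 450), Hour(20, 50),
                    Hour(15, 40), Hour(20, 60), Hour(95, 480)],
}
print(best_placement(forecasts, job_hours=3))
```

With these made-up numbers, the 3-hour job lands in `solar-south` starting at hour 2. Steady hydro wins any window that overlaps the solar region's expensive, carbon-heavy hours. A real orchestrator would also weigh queue time, data transfer, and GPU availability, which this sketch ignores.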

“The compute function of AI training requires operational synchrony.”

That synchrony breaks if power blips. Batteries, smart scheduling, and high-speed interconnects keep thousands of GPUs in lockstep. Meanwhile, the industry’s biggest spenders are pouring capital into GPU capacity and power deals, accelerating the shift to where clean electrons are.
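The battery role can be illustrated with a simple peak-shaving model. The sketch assumes a cluster whose draw sometimes exceeds a fixed grid import cap. The battery discharges to cover the excess and recharges from spare headroom below the cap. It is a deliberately simplified, hypothetical model: lossless storage, no inverter power limit, hourly steps. It does not capture the sub-second ride-through that keeps synchronized GPUs from stalling. `shave_peaks` and all numbers are illustrative.

```python
from __future__ import annotations


def shave_peaks(
    load_mw: list[float],
    grid_cap_mw: float,
    capacity_mwh: float,
    step_h: float = 1.0,
) -> tuple[list[float], list[float]]:
    """Return (grid_draw_mw, state_of_charge_mwh) for each step; the battery starts full."""
    soc = capacity_mwh
    grid_draw, charge_trace = [], []
    for load in load_mw:
        if load > grid_cap_mw:
            # Discharge to cover the excess, limited by the energy still stored.
            discharged = min((load - grid_cap_mw) * step_h, soc)
            soc -= discharged
            grid_draw.append(load - discharged / step_h)
        else:
            # Recharge from spare headroom under the cap, limited by free capacity.
            charged = min((grid_cap_mw - load) * step_h, capacity_mwh - soc)
            soc += charged
            grid_draw.append(load + charged / step_h)
        charge_trace.append(soc)
    return grid_draw, charge_trace


# Hypothetical hourly cluster load (MW), a 100 MW import cap, and a 40 MWh battery.
grid, soc = shave_peaks([80, 120, 130, 90, 70], grid_cap_mw=100, capacity_mwh=40)
print(grid)  # draw seen by the grid, MW
print(soc)   # battery state of charge, MWh
```

In this run the battery holds the grid at the cap through the first peak but runs dry during the second, so the grid briefly sees 110 MW. Sizing storage against the longest expected excursion is the real design question.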

The Why Behind the Move

Here is the shift, analyzed through a builder’s lens.

• Model

Usage-based GPU clouds turn capex into opex. Power-aware orchestrators align training windows with renewable availability and spot pricing. The result: lower blended cost per token and fewer job interruptions.
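"Blended cost per token" is simply total spend divided by tokens actually processed, across every pricing tier used. The sketch below shows the arithmetic. It discounts cheaper interruptible capacity by a useful-work fraction. The `Segment` type, the prices, the 0.8 useful fraction, and the assumed throughput of 1M tokens per GPU-hour are hypothetical placeholders, not quoted rates.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Segment:
    gpu_hours: float
    usd_per_gpu_hour: float
    useful_fraction: float = 1.0  # share of hours not lost to interruptions or rework


def blended_usd_per_mtok(segments: list[Segment], mtok_per_gpu_hour: float) -> float:
    """Total spend divided by millions of tokens actually processed."""
    spend = sum(s.gpu_hours * s.usd_per_gpu_hour for s in segments)
    mtok = sum(s.gpu_hours * s.useful_fraction * mtok_per_gpu_hour for s in segments)
    return spend / mtok


# Hypothetical throughput: 1M tokens per GPU-hour.
on_demand_only = [Segment(1000, 4.00)]
mixed = [Segment(600, 4.00), Segment(500, 1.60, useful_fraction=0.8)]
print(blended_usd_per_mtok(on_demand_only, 1.0))
print(blended_usd_per_mtok(mixed, 1.0))
```

Both plans process the same 1,000 million tokens. The mixed plan buys 100 extra GPU-hours to absorb interruptions, yet comes in at about $3.20 per million tokens versus $4.00. That is the sense in which flexible, cheaper windows can lower the blended rate even when some work is lost.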

• Traction

GPUs, built for parallelism, fit AI’s workload profile. As model sizes scale, the gains from optimized placement (right region, right time) can outweigh marginal kernel tweaks.

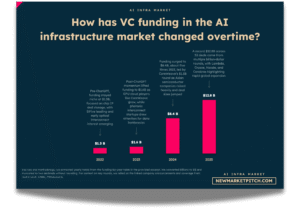

• Valuation / Funding

Capital is rotating to infrastructure: compute, interconnect, power. Massive commitments from hyperscalers and big tech are tightening available supply and pulling startups toward rental markets and green regions.

• Distribution

GPU clouds, specialized providers, and marketplaces win on two vectors: fast access to scarce chips and proximity to cheap, clean power. Distribution isn’t an SDK—it’s capacity plus placement.

• Partnerships & Ecosystem Fit

Winners partner beyond tech: utilities, energy traders, battery providers, and data center operators. On the edge, OEM and platform ties matter for rolling out low-latency inference.

• Timing

Grid stress is now a built-in condition of the AI buildout, not a temporary glitch. Flexible AI “factories” can absorb volatility from renewables. The timing favors teams that can schedule training to the grid, not against it.

• Competitive Dynamics

Hyperscalers offer scale; specialized GPU clouds compete on price, queue time, and green-region placement. Edge providers differentiate on latency and footprint. Compute locality vs. power arbitrage is the new trade-off.

• Strategic Risks

- GPU supply and interconnect constraints can nullify placement wins.

- Over-optimizing for green hours may increase job latency or complexity.

- Compliance and data gravity still pull some workloads on-prem.

- Grid interconnection delays can stall new capacity, and “paper green” claims (clean-energy credits without matching clean supply) risk credibility.

Here’s the part most people miss: the new moat isn’t just the model—it’s power-aware distribution of compute.

What Builders Should Notice

- Treat power as a first-class input to your training plan.

- Schedule deferrable jobs into renewable-heavy windows; measure cost per successful step, not only $/GPU-hour.

- Use GPU clouds to de-risk capacity, then regionalize for latency or data gravity.

- Protect reliability with batteries or with preemption-tolerant training checkpoints.

- Partner early with data centers and utilities; securing capacity is as much a business development problem as a technical one.
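Cost per successful step and checkpointing are linked. A preemption throws away every step since the last checkpoint, while checkpointing too often burns time on saves. The sketch below simulates one run under hypothetical assumptions: fixed step time, fixed save and restart overheads, and known preemption points counted in executed steps. It then divides total spend by the steps that actually count. The function, its parameters, and every number are illustrative.

```python
from __future__ import annotations


def cost_per_successful_step(
    target_steps: int,
    checkpoint_every: int,
    preempt_at: list[int],
    step_s: float,
    checkpoint_s: float,
    restart_s: float,
    usd_per_gpu_hour: float,
    num_gpus: int,
) -> float:
    """Simulate one run; preempt_at holds non-negative executed-step counts
    (redone steps included) at which the job is killed."""
    if checkpoint_every <= 0:
        raise ValueError("checkpoint_every must be positive")
    pending = sorted(preempt_at)
    done = last_ckpt = executed = saves = restarts = 0
    while done < target_steps:
        if pending and executed == pending[0]:
            # Preempted: everything since the last checkpoint is lost.
            pending.pop(0)
            done = last_ckpt
            restarts += 1
            continue
        executed += 1
        done += 1
        if done % checkpoint_every == 0:
            last_ckpt = done
            saves += 1
    # Wall-clock time includes redone steps, checkpoint saves, and restarts.
    wall_s = executed * step_s + saves * checkpoint_s + restarts * restart_s
    spend = wall_s / 3600 * usd_per_gpu_hour * num_gpus
    return spend / target_steps


# Same $/GPU-hour and same preemptions; only the checkpoint interval differs.
common = dict(target_steps=1000, preempt_at=[450, 900], step_s=1.0,
              checkpoint_s=10.0, restart_s=60.0, usd_per_gpu_hour=2.0, num_gpus=8)
for every in (100, 500):
    print(every, cost_per_successful_step(checkpoint_every=every, **common))
```

Here both runs pay the identical hourly rate. Checkpointing every 500 steps redoes 900 steps after the two preemptions, so its cost per successful step is roughly 1.5x that of checkpointing every 100. A $/GPU-hour comparison alone would hide that gap.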

Buildloop reflection

The next AI advantage isn’t a bigger model. It’s smarter placement of compute.

Sources

- Arc Compute — Why Investors Are Shifting From AI Startups to AI Infrastructure

- NVIDIA Blog — How AI Factories Can Help Relieve Grid Stress

- Medium (CyfutureAI) — The Business Case for Moving AI Workloads to GPU Cloud Services

- LinkedIn (Pulse) — Compute Wars: Why AI Companies Are Burning Billions on GPUs

- E Source — Why are batteries becoming essential for AI data center load management?

- Tekleaders — How GPU as a Service Is Revolutionizing AI with Cloud Supercomputing

- LinkedIn (Ferroelectric Memory Company) — AI changed the nature of computing workloads almost overnight

- Carbon Credits — AI Demand to Drive $600B From the Big Five for GPU and Data Center Boom by 2026

- Scale Computing — Transforming Edge AI: How GPUs Deliver Real-Time Performance