What Changed and Why It Matters

The Pentagon moved to replace Anthropic as a core AI provider after a public standoff over acceptable use and safety posture. Coverage points to rising friction over contract clauses, governance, and mission scope.

This matters because defense buyers are standardizing around explicit usage commitments. Vendors now face a binary choice: accept broad “all lawful purposes” mandates, or narrow access in line with their safety guardrails and live with the distribution trade-offs.

“All lawful purposes”

“Supply chain risk”

“Opens doors for smaller rivals”

Zoom out and the pattern is clear: AI procurement is fragmenting by policy stance, not just model quality. That shift creates risk for incumbents—and daylight for niche vendors with compliant stacks, tighter controls, and clear auditability.

The Actual Move

Here is the concrete sequence reflected across reports and posts:

- Anthropic previously secured a two-year, roughly $200M Department of Defense contract in mid-2025, positioning Claude for defense use under safety-forward constraints.

- Through early March 2026, debate intensified around frontier AI in intelligence, cybersecurity, and logistics. Procurement pressure rose to define broader mission scope and usage rights.

- The Pentagon began moving to replace Anthropic’s tools and labeled the vendor a “supply chain risk,” signaling a pivot to alternatives and multi-model resilience.

- Hours after reports of the Anthropic break, OpenAI finalized a Pentagon deal that affirms AI use for “all lawful purposes.”

- Media and community chatter framed the split as a door-opener for smaller AI vendors—especially those with compliant-by-default products, tighter logging, and on-prem or air-gapped options.

- Brand dynamics diverged: OpenAI faced user criticism over the defense deal, while Anthropic saw a lift in downloads, perceived trust, and enterprise interest, per marketing and go-to-market (GTM) coverage.

The Why Behind the Move

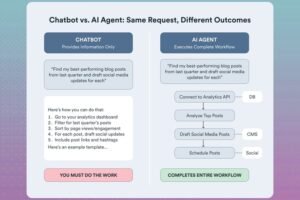

Founders should read this as a distribution and governance story disguised as a model story.

• Model

Defense needs predictable behavior, policy control, and audit trails. General-purpose frontier models can work—but only if vendors accept enforceable usage clauses and operable guardrails.

• Traction

Anthropic’s public stance appears to have boosted consumer and some enterprise trust. OpenAI secured immediate distribution into defense workflows. Each optimized for a different trust surface.

• Valuation / Funding

No new financing details were disclosed across the coverage. The real currency here is contract access and policy leverage.

• Distribution

Government procurement is compliance-first distribution. Vendors that align on clauses, monitoring, and data residency will outpace technically stronger contenders who can’t—or won’t—sign.

• Partnerships & Ecosystem Fit

Expect integrators to push multi-model stacks to reduce vendor lock-in and exposure to policy whiplash. Smaller vendors with strong MLOps, evals, and deployment security can win via partnerships.

• Timing

Defense AI is crossing from pilots to production. That transition forces hard choices on scope of use, safety constraints, and accountability. Clauses are becoming the product.

• Competitive Dynamics

OpenAI captured the near-term slot. Anthropic gained brand equity with users skeptical of defense use. Smaller vendors now have clear lanes: classified deployments, on-prem control, robust logging, or narrower mission-tuned models.

• Strategic Risks

- For DoD: concentration risk and governance drift if oversight lags deployment speed.

- For OpenAI: user backlash and reputational cost outside defense.

- For Anthropic: lost contract revenue versus a stronger trust moat.

- For small vendors: long sales cycles, Authorization to Operate (ATO) hurdles, and the need to prove stability at scale.

What Builders Should Notice

- Trust is a moat—when it’s codified in product and policy, not just messaging.

- In regulated markets, your terms are part of your product. Treat them as features.

- Multi-model is the default for buyers with mission risk. Integrate, don’t isolate.

- Procurement favors verifiable controls: logging, evals, red-teaming, and rollback plans.

- Brand stance shapes distribution. Choose a lane and execute with conviction.
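
To make "terms as features" and "multi-model by default" concrete, here is a minimal sketch of a router that checks each vendor's permitted uses before calling it and records every decision in an audit log. Everything in it is an assumption for illustration: the `Provider` and `Router` classes, the `allowed_uses` field, the use-case labels, and the vendor names are hypothetical, and the `call` function stands in for a real model client. It is not how any vendor or agency actually implements this.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable


@dataclass(frozen=True)
class Provider:
    """A hypothetical model vendor plus the uses its terms permit."""
    name: str
    allowed_uses: frozenset[str]   # use categories allowed by contract (assumed labels)
    call: Callable[[str], str]     # stand-in for a real model client


@dataclass
class Router:
    """Tries providers in order, skipping any whose terms exclude the use case."""
    providers: list[Provider]
    audit_log: list[dict] = field(default_factory=list)

    def _log(self, provider: str, use_case: str, outcome: str) -> None:
        # Append-only record so each routing decision can be reviewed later.
        self.audit_log.append({
            "ts": datetime.now(timezone.utc).isoformat(),
            "provider": provider,
            "use_case": use_case,
            "outcome": outcome,
        })

    def route(self, prompt: str, use_case: str) -> str:
        for p in self.providers:
            if use_case not in p.allowed_uses:
                self._log(p.name, use_case, "skipped_by_terms")
                continue
            try:
                reply = p.call(prompt)
            except Exception as exc:
                # Fall through to the next provider instead of failing the request.
                self._log(p.name, use_case, f"error:{type(exc).__name__}")
                continue
            self._log(p.name, use_case, "served")
            return reply
        raise RuntimeError(f"no provider served use case {use_case!r}")


if __name__ == "__main__":
    router = Router([
        Provider("vendor_a", frozenset({"logistics"}), lambda p: f"A:{p}"),
        Provider("vendor_b", frozenset({"logistics", "cyber"}), lambda p: f"B:{p}"),
    ])
    print(router.route("summarize shipment delays", "cyber"))
    print([entry["outcome"] for entry in router.audit_log])
```

The design choice worth noticing is that the contract scope lives in data next to the model client, so a change in terms becomes a config change with a logged effect rather than a silent behavior shift.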

Buildloop reflection

“In AI, the spec isn’t just the model. It’s the contract.”

Sources

- Yahoo Finance — Pentagon’s ouster of Anthropic opens doors for small AI rivals

- Facebook — Pentagon bans Anthropic and signs OpenAI

- Reddit — Anthropic, a company actively trying to compete with …

- Forbes — Anthropic, The Pentagon And The New Battle Over Artificial …

- CBS Mornings (via Facebook) — Defense Sec. Pete Hegseth has given tech company …

- LinkedIn — The Anthropic-Pentagon Standoff: Your AI Vendor Risk …

- Digiday — Anthropic’s Pentagon refusal boosts downloads and brand …

- Fuzzy Labs — The Anthropic–Pentagon Showdown

- Yahoo Finance (Bloomberg) — Pentagon Moving to Replace Anthropic Amid AI Feud, …