What Changed and Why It Matters

DeepSeek ignited a global recalibration. Not just about benchmarks — about economics, geopolitics, and distribution.

It triggered market whiplash, a flood of commentary, and policy posturing. Headlines ranged from panic to pragmatism. Some investors questioned inflated AI premiums. Others doubled down on scale.

Here’s the part most people miss: the center of gravity shifted from raw parameter count to cost-quality efficiency and deployability. China leaned into this shift with scrappy developer hubs, GPU optimization, and fast-moving education channels.

Efficiency — not maximal scale — became the loudest signal. And efficiency compounds.

The Actual Move

Across sources, a clear pattern emerged in the year after DeepSeek:

- China’s developer engine accelerated. Hangzhou’s “coder village” became a focal point for AI building and hiring, symbolizing a bottom-up ecosystem push.

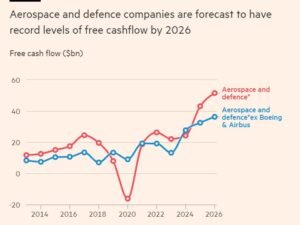

- Markets re-priced AI risk. Reports noted a multi-trillion-dollar swing in market value as investors reassessed AI multiples amid claims that cheaper models could close the gap with incumbents.

- Big Tech and policymakers reacted unevenly. Commentary described U.S. federal science and tech policy as contradictory, torn between open research, national security, and industrial goals, even as the DeepSeek moment was used to argue for tighter policy alignment.

- Cloud and infra players emphasized GPU efficiency. Industry voices highlighted aggressive pooling and utilization techniques, with claims from Chinese cloud providers of sizable GPU savings.

- The early media frenzy cooled, but the build continued. On-the-ground reporting six months on described a more sober, steady acceleration — from K–12 AI education demand to training programs for developers.

The first wave was shock. The second wave was systems work: infra, talent, and distribution.

The Why Behind the Move

Zoom out and the pattern becomes obvious: DeepSeek reframed the game from “biggest model wins” to “fastest cost-to-quality compounding wins.”

• Model

Cheaper-to-train, cheaper-to-run models gained legitimacy. The frontier moved toward routing, compression, and smarter utilization, not just larger scale.

• Traction

Grassroots developer networks and education pipelines expanded. Demand from parents and schools rose, creating a longer-term talent moat alongside immediate product velocity.

• Valuation / Funding

AI multiples looked fragile when cost curves shifted. A fast, efficiency-led entrant can compress incumbent margins — even before displacing products.

• Distribution

Winners prioritized practical deployment: model endpoints integrated into workflows, enterprise contracts, and local ecosystems. Distribution proved stickier than benchmarks.

• Partnerships & Ecosystem Fit

Cloud pooling, local GPU alliances, and education partnerships mattered more than splashy announcements. Small, compounding collaborations beat headline deals.

• Timing

Export controls and GPU scarcity forced innovation in utilization. Constraints bred ingenuity — and advantaged teams already optimizing for cost.

• Competitive Dynamics

Incumbents guarded margins with scale and brand. Challengers pressed on efficiency and local fit. The real fight moved to deployment economics and trust.

• Strategic Risks

- Policy whiplash and compliance burden

- Over-indexing on hype cycles and social-media claims

- Reliability and safety gaps in cheaper stacks

- Vendor lock-in at the infra layer

The moat isn’t the model — it’s cost-aware distribution under policy constraints.

What Builders Should Notice

- Build for constrained compute. Efficiency is a feature users feel.

- Distribution outruns benchmarks. Win the workflow, not the leaderboard.

- Policy is product surface area. Treat it like a dependency, not noise.

- Talent pipelines compound. Train your market while you build it.

- Prepare for repricing. Market mood can swing faster than roadmaps.

Buildloop reflection

In AI, the cheapest path to “good enough” wins, until trust decides the rest.

Sources

- Forbes — Panic Over DeepSeek Exposes AI’s Weak Foundation On Hype

- The Economic Times — The coder ‘village’ at heart of China’s latest AI frenzy

- LSE USAPP — Amidst the frenzy over DeepSeek AI, US federal science and tech policy is a mess of internal contradictions

- Fair Observer — FO° Exclusive: Chinese AI Startup DeepSeek Sparks Global Frenzy

- Facebook — China’s Deepseek AI outperforms ChatGPT in weeks

- Mindset AI — DeepSeek’s AI Breakthrough: Hype or Game-Changer?

- The Economist — Six months after DeepSeek’s breakthrough, China speeds on with AI

- LinkedIn — China’s innovation and infrastructure lead: A Singapore perspective

- Substack — ChinAI Newsletter | Jeffrey Ding

- UMU — How did the $2 trillion loss occur in relation to DeepSeek AI?