What Changed and Why It Matters

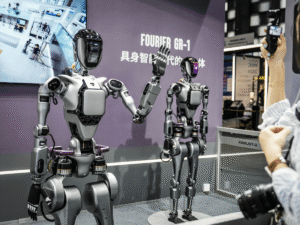

Robotics has a training data problem. Most models still break in the wild.

As Toloka puts it:

“Robotics models fail when training data doesn’t look like real life.”

Here’s the shift: manufacturing workflows, worker know‑how, and shop-floor telemetry are becoming the raw material for robot learning. Data now moves directly from human process to model weights.

Why now: plants are instrumented, annotation tools are robotics-aware, and teams are building continuous data loops from deployment back to training. Meanwhile, demand for skilled operators exceeds supply. Upskilling and automation are colliding into a new data flywheel.

The signal is everywhere—from industrial data capture to consumer task recordings. The market is quietly standardizing how we turn human skill and messy reality into machine-readable understanding.

The Actual Move

This isn’t a single product launch. It’s an ecosystem upgrade that connects shop-floor operations to robot learning loops.

- Data capture gets embedded on the floor. MachineMetrics shows why automated shop-floor data capture matters: less manual logging, more real-time machine signals flowing into analytics and control. The Manufacturer highlights real-time sensor analysis—vibration, acoustic emission, force, and current—to detect issues like chatter before quality slips.

- Annotation tools go beyond boxes. Label Studio reminds us that robotics labeling is about behaviors and affordances, not just pixels.

“Labeling isn’t just drawing boxes.”

Teams now tag actions, poses, success/failure states, and time-linked events across video, depth, and proprioception.

- Data marketplaces reach into homes and factories. A Reddit thread notes a program paying $50/hour to record everyday tasks such as folding laundry and vacuuming. That’s a consumer-facing version of what industry is doing at scale: sourcing grounded task data rather than synthetic approximations. It’s unverified chatter, but it points to where the market is going.

- Workforce training gets reframed as dataset creation. RoboticsTomorrow cites studies where focused robotics training boosts productivity on automated tasks by over 70%. Training modules, SOPs, and guided work apps double as structured, labeled demonstrations for models.

- The automation backbone tightens. Sailotech and Tulip detail how shop-floor apps and MES/IIoT stacks standardize workflows, capture timestamps and operator inputs, and unify the context needed for AI to learn control policies. Tulip, in particular, shows how “operational foundation” work creates clean, queryable histories of how tasks are actually done.

- Standards emerge around manipulation. OLogic flags the core issue, that training data is still the Achilles’ heel, and points to efforts like Stanford’s Universal Manipulation Interface (UMI), which aim to normalize interfaces and data across robot platforms. Fewer bespoke formats, more reusable demonstrations.

- Quality control gets operationalized. Toloka outlines best practices for reality-first data collection, annotator guidance, inter-annotator agreement, and active learning loops—train, deploy, capture failures, label, retrain.

Put together, this is a production-grade pipeline: capture rich signals on the floor, structure them with robotics-aware labeling, push models, observe edge failures, and feed those misses back into the dataset. Repeat.
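One step of that loop, deciding which deployed episodes go back to annotators, can be sketched in a few lines. This is a minimal illustration, not any vendor's method: the `Episode` fields, the `select_for_labeling` helper, and the 0.6 confidence floor are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Episode:
    episode_id: str
    succeeded: bool    # observed task outcome on the floor
    confidence: float  # model's self-reported confidence, 0..1 (assumed available)


def select_for_labeling(episodes, budget, confidence_floor=0.6):
    """Pick episodes for annotation: failures first, then low-confidence successes."""
    failures = [e for e in episodes if not e.succeeded]
    uncertain = [e for e in episodes if e.succeeded and e.confidence < confidence_floor]
    # Within each group, the least confident episodes are queued first.
    ranked = sorted(failures, key=lambda e: e.confidence) + sorted(
        uncertain, key=lambda e: e.confidence
    )
    return ranked[:budget]


episodes = [
    Episode("a", True, 0.95),
    Episode("b", False, 0.80),
    Episode("c", True, 0.40),
    Episode("d", False, 0.30),
    Episode("e", True, 0.55),
]
queue = select_for_labeling(episodes, budget=3)
print([e.episode_id for e in queue])  # ['d', 'b', 'c']
```

Ranking failures ahead of uncertain successes is one plausible policy. A real plant would likely also weigh safety impact and how often a given failure mode recurs.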

The Why Behind the Move

Robots don’t fail because of compute. They fail because of context. This move closes the context gap.

• Model

Real-world robotics needs temporal, multimodal data: video, force, current, operator inputs, environment states. Labeling now encodes actions and outcomes, not just frames. Active learning keeps models grounded in plant-specific edge cases.
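To make "actions and outcomes, not just frames" concrete, here is a hedged sketch of what one labeled episode might look like. The schema is hypothetical: `Event`, `LabeledEpisode`, the `force_n` stream name, and the `window` helper are illustrative assumptions, not a format used by any tool named here.

```python
from dataclasses import dataclass, field


@dataclass
class Event:
    t_start: float  # seconds from episode start
    t_end: float
    action: str     # e.g. "grasp", "insert"
    outcome: str    # "success" or "failure"


@dataclass
class LabeledEpisode:
    episode_id: str
    # modality name -> list of (timestamp_seconds, value) samples
    streams: dict = field(default_factory=dict)
    events: list = field(default_factory=list)

    def window(self, modality, event):
        """Return the samples of one modality that fall inside an event's time span."""
        return [
            (t, v)
            for t, v in self.streams.get(modality, [])
            if event.t_start <= t <= event.t_end
        ]


ep = LabeledEpisode(
    episode_id="demo-001",
    streams={"force_n": [(0.0, 0.1), (0.5, 2.3), (1.0, 4.8), (1.5, 0.2)]},
    events=[Event(0.4, 1.1, "insert", "failure")],
)
print(ep.window("force_n", ep.events[0]))  # [(0.5, 2.3), (1.0, 4.8)]
```

Keeping every modality on a shared clock is the design choice that matters: it lets a single time-linked label slice video, force, and operator inputs consistently.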

• Traction

Manufacturers already run sensors and track overall equipment effectiveness (OEE). With Tulip-style apps and MachineMetrics-like connectivity, turning that existing data exhaust into training data is straightforward. It’s easier to scale data where processes are already standardized.

• Valuation / Funding

Data flywheels create durable enterprise value. Whoever controls ongoing, high-quality, consented demonstrations and outcomes data will command better performance and margins than model-only rivals.

• Distribution

The moat isn’t the model—it’s the install base. Vendors embedded in MES, work instructions, and machine connectivity become the default gateway for data-to-model loops. Distribution beats clever algorithms.

• Partnerships & Ecosystem Fit

Annotation platforms (Toloka, Label Studio) slot into IIoT stacks (Tulip, MachineMetrics), and integrators connect the combined pipeline to robots. Standards like UMI reduce friction across hardware.

• Timing

- Plants are sensor-rich but under-modeled.

- Foundation models can learn from fewer but better demonstrations.

- Skills gaps are acute; training programs produce both capability and data.

- Enterprises are past pilots; they want measurable ROI.

• Competitive Dynamics

Expect two playbooks:

- Vertical specialists who own a task stack end-to-end (e.g., palletization, kitting, inspection) and capture pristine domain data.

- Horizontal platforms that standardize capture, labeling, and feedback across many tasks and vendors.

Winners will couple both: deep task mastery plus interoperable data rails.

• Strategic Risks

- Data rights and consent: who owns worker demonstrations and outcomes? Get this wrong and trust collapses.

- Privacy and safety: cameras and audio on the floor must be minimized and governed.

- Label drift and domain shift: processes evolve; stale labels degrade performance.

- Overfitting to one plant: portability depends on standards and diverse data.

- Change management: operators must see value, not surveillance.

What Builders Should Notice

- Design for loops, not launches. Ship, instrument, capture failures, and retrain.

- Your biggest lever is data specificity, not model novelty.

- Distribution lives in the work app. Own the workflow, own the dataset.

- Quality control is a feature. Measure label agreement and outcome lift.

- Consent and governance are product surfaces. Make them visible and simple.
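On measuring label agreement: one common statistic is Cohen's kappa, which corrects raw agreement between two annotators for the agreement expected by chance. The sketch below is a plain-Python illustration. The `cohens_kappa` helper and the sample labels are made up for the example, and none of the sources here prescribe this particular metric.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labeling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and of equal length")
    n = len(labels_a)
    # Fraction of items on which the two annotators agree.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected by chance, from each annotator's label frequencies.
    count_a, count_b = Counter(labels_a), Counter(labels_b)
    expected = sum(count_a[c] * count_b[c] for c in count_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1 - expected)


annotator_1 = ["success", "success", "failure", "failure", "success", "failure"]
annotator_2 = ["success", "failure", "failure", "failure", "success", "success"]
print(round(cohens_kappa(annotator_1, annotator_2), 3))  # 0.333
```

Here the annotators agree on 4 of 6 clips, but half of that agreement would be expected by chance, so kappa lands near 0.33. A score that low would usually prompt a look at the annotation guidelines before training on the labels.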

Buildloop reflection

The future of robotics won’t be hand-coded. It will be recorded, labeled, and repeated.

Sources

- Toloka — How to build robotics training data that works in the real world

- RoboticsTomorrow — Addressing the Skills Gap in Manufacturing through …

- Reddit — Is anyone else noticing this? Robotics training data …

- Sailotech — Shop Floor Automation: The Backbone of Smart …

- Label Studio — The Rise of Real-World Robotics—and the Data Behind It

- The Manufacturer — Enhancing the shop floor with AI

- OLogic — Why Training Data is Still the Achilles’ Heel of Robotics

- MachineMetrics — The Benefits of Shop Floor Data Capture (And How to …

- Tulip Interfaces — Applying AI on the Shop Floor – Tulip Interfaces