What Changed and Why It Matters

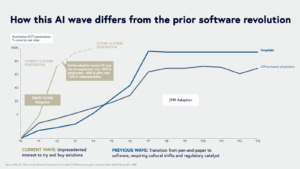

AI is forcing a reckoning with data quality. Years of neglected governance and inconsistent pipelines have compounded into “data debt” that now blocks AI ROI. CIOs are sounding alarms, and boards are finally paying attention.

“Data quality debt is AI’s hidden tax.”

“AI doesn’t fail because of bad models—it fails because of bad data.”

Two signals cut through the noise. First, enterprise leaders now frame data debt as an AI value killer, not a back-office nuisance. Second, the market is shifting from generic copilots to domain-specific data assistants built to find, fix, and govern messy data at scale. Precisely notes that 51% of data and analytics leaders cite data quality as their top integrity challenge. That pressure is moving budgets and priorities.

Here’s the part most people miss: AI is both the critic and the cure. It exposes data debt faster than ever—and it can increasingly help clean it up.

The Actual Move

The ecosystem is converging on AI-assisted data quality management as a first-class capability:

- Enterprise IT framing: CIO reporting highlights “data debt” as a board-level risk and a core AI blocker. The fix requires governance, lineage, and operational quality—not just better models.

- Market posture: Precisely argues the “data quality debt has come due,” pointing to years of deferred hygiene now colliding with AI expectations. Its materials and webinars push automated profiling, standardization, and remediation.

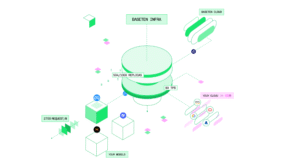

- Platform capabilities: Acceldata outlines how AI-driven data observability is redefining accuracy—continuous profiling, anomaly detection, and policy-driven governance to keep pipelines healthy in real time.

- Operating roles: Data stewardship is moving from support to center stage, with stewards coordinating domain standards, resolving quality incidents, and enforcing metadata discipline.

- Guardrails: Research on “digital debt” warns that assistants can help only if used carefully—transparency, human oversight, and clear provenance remain non-negotiable.

- Adjacent lesson: In engineering, AI-generated code can create technical debt in complex systems like IoT. The analogy holds for data: automation without standards multiplies debt.

- Emerging practice: AI tools are also improving debt detection itself—pattern-based issue finding, KPI tracking, and automated triage for data incidents.
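
To make "continuous profiling" and "anomaly detection" concrete, here is a minimal sketch in plain Python. It is not any vendor's implementation: the function names `profile_column` and `is_anomalous`, the treatment of empty strings as nulls, and the 3-sigma threshold are all assumptions chosen for illustration.

```python
from statistics import mean, stdev


def profile_column(values):
    """Basic profile for one column: row count, null rate, distinct count.

    Assumption: None and "" both count as missing values.
    """
    total = len(values)
    nulls = sum(1 for v in values if v is None or v == "")
    distinct = len({v for v in values if v is not None and v != ""})
    return {
        "rows": total,
        "null_rate": nulls / total if total else 0.0,
        "distinct": distinct,
    }


def is_anomalous(history, latest, threshold=3.0):
    """Flag `latest` when it sits more than `threshold` sample standard
    deviations away from the mean of `history` (a simple z-score test)."""
    if len(history) < 2:
        return False  # not enough history to estimate spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return latest != mu  # flat history: any change is a deviation
    return abs(latest - mu) / sigma > threshold


# Hypothetical usage: profile a column, then check today's row count
# against recent daily loads.
email_profile = profile_column(["a@x.com", None, "b@x.com", "a@x.com", ""])
row_count_alert = is_anomalous([1000, 1020, 980, 1010, 990], 400)
```

A z-score is the simplest possible detector. Real pipelines usually need seasonality-aware baselines, but the shape is the same: profile on every load, compare against history, alert on deviation.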

“The data quality debt has come due.”

“AI assistants can help—but we must use them carefully.”

Bottom line: Vendors and teams are shifting from “build the model” to “fix the data,” with AI data assistants embedded into the data lifecycle—ingest, transform, validate, monitor, and govern.

The Why Behind the Move

AI that learns from flawed data compounds errors. Leaders are optimizing for trust, not just throughput.

• Model

Models are a commodity. Clean, governed data is the differentiator. Assistants now target profiling, anomaly detection, lineage, and remediation before training or inference.

• Traction

Enterprises feel the pain. Per Precisely, 51% of data and analytics leaders cite data quality as their top integrity challenge. Teams adopt assistants that reduce incidents and time-to-detect.

• Valuation / Funding

Budget flows to platforms that turn AI promises into audited outcomes: fewer data breaks, faster fixes, and clear lineage. ROI shows up as less model drift and fewer support tickets.

• Distribution

Winning tools live where data moves: warehouses, lakes, orchestration, and BI. Native connectors, policy packs, and low-friction workflows beat standalone dashboards.

• Partnerships & Ecosystem Fit

Tight integrations with Snowflake, Databricks, BigQuery, Airflow, and dbt are table stakes. Metadata platforms and catalog tie-ins amplify value.

• Timing

AI’s intolerance for bad data accelerates accountability. What was tolerable in BI is unacceptable in AI products exposed to customers.

• Competitive Dynamics

Copilots abound. The moat isn’t the model—it’s governance, domain context, and distribution into existing data ops.

• Strategic Risks

- Over-automation without standards creates new debt.

- Assistants that lack transparency erode trust.

- Fragmented tools increase observability blind spots.

What most people miss: quality is not a one-off project. It’s an operating system—codified by policy, enforced by automation, and owned by stewards.
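
One way to read "codified by policy, enforced by automation, and owned by stewards" is policy-as-code. The sketch below is an illustration under stated assumptions, not a reference to any real policy engine: the `Policy` class, the two example rules, and the steward names are all hypothetical.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class Policy:
    """A single data-quality rule with a named owner (hypothetical schema)."""
    name: str
    column: str
    check: Callable[[Any], bool]  # returns True when the value passes
    owner: str                    # steward accountable for violations


# Example rules; column names and owners are made up for illustration.
POLICIES = [
    Policy("email_present", "email", lambda v: bool(v), owner="crm-steward"),
    Policy(
        "amount_non_negative",
        "amount",
        lambda v: isinstance(v, (int, float)) and v >= 0,
        owner="finance-steward",
    ),
]


def evaluate(rows, policies):
    """Run every policy against every row and collect violations.

    Each violation records the row index, the policy name, and the owner,
    so the incident can be routed to the responsible steward.
    """
    violations = []
    for index, row in enumerate(rows):
        for policy in policies:
            if not policy.check(row.get(policy.column)):
                violations.append(
                    {"row": index, "policy": policy.name, "owner": policy.owner}
                )
    return violations


# Hypothetical usage: the second row breaks both rules.
rows = [
    {"email": "a@x.com", "amount": 10},
    {"email": "", "amount": -5},
]
found = evaluate(rows, POLICIES)
```

The point of the design is the `owner` field: every rule that can fail has a named steward, so automation produces routed incidents rather than anonymous alerts.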

What Builders Should Notice

- Make data quality a product requirement, not a clean-up task.

- Automate the boring parts: profiling, anomaly alerts, and policy checks.

- Assign data stewards with real authority and clear SLAs.

- Choose assistants that integrate natively with your data stack.

- Measure debt like tech debt: track incidents, time-to-detect, time-to-fix, and impact.
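
The last item can be sketched as a small metrics rollup. This is a minimal example, assuming each incident records when the bad data landed, when it was detected, and when it was fixed. The `Incident` fields and the use of affected rows as the impact measure are assumptions, not a standard.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Incident:
    """One data-quality incident (hypothetical record layout)."""
    occurred_at: datetime
    detected_at: datetime
    resolved_at: datetime
    rows_affected: int  # stand-in for "impact"


def debt_metrics(incidents):
    """Roll incidents up into count, mean time-to-detect,
    mean time-to-fix, and total rows affected."""
    if not incidents:
        return {
            "incidents": 0,
            "mean_time_to_detect": timedelta(0),
            "mean_time_to_fix": timedelta(0),
            "rows_affected": 0,
        }
    n = len(incidents)
    detect = sum((i.detected_at - i.occurred_at for i in incidents), timedelta(0))
    fix = sum((i.resolved_at - i.detected_at for i in incidents), timedelta(0))
    return {
        "incidents": n,
        "mean_time_to_detect": detect / n,
        "mean_time_to_fix": fix / n,
        "rows_affected": sum(i.rows_affected for i in incidents),
    }


# Hypothetical usage with two made-up incidents.
sample = [
    Incident(datetime(2024, 1, 1, 0), datetime(2024, 1, 1, 2),
             datetime(2024, 1, 1, 6), rows_affected=100),
    Incident(datetime(2024, 1, 2, 0), datetime(2024, 1, 2, 4),
             datetime(2024, 1, 2, 6), rows_affected=300),
]
summary = debt_metrics(sample)
```

Tracked per week or per domain, these four numbers give stewards a trend line instead of anecdotes.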

Buildloop reflection

AI compounds value only on trusted data. Ship the trust layer first.

Sources

- CIO — Data debt: AI’s value killer hidden in plain sight

- LinkedIn — Why Data Quality Debt Hurts AI Initiatives

- P3 Adaptive — Data Debt: Why AI Data Quality Matters

- LinkedIn — Data Quality Debt: Addressing Integrity Challenges in AI …

- Phys.org — Drowning in ‘digital debt’? AI assistants can help—but we …

- Precisely — Data Quality and the Rising Cost of Data Debt: Why AI Is …

- Towards Data Science — How AI Tools Generate Technical Debt in IoT Systems

- YouTube — Data Stewards: Conquering Data Debt in the Age of AI

- Acceldata — How AI Data Quality Management Is Redefining Accuracy …

- Cerfacs — The Impact of AI-Generated Code on Technical Debt and …