What Changed and Why It Matters

An Israeli startup, Tenzai, claims its AI hacking agent outperformed 99% of 125,000 human competitors across six elite cybersecurity competitions. Forbes reported the result; social posts echoed the headline detail and the tech stack: custom workflows running on OpenAI and Anthropic models.

“An AI system has just beaten more than 99% of 125,000 human hackers across six elite cyber competitions.”

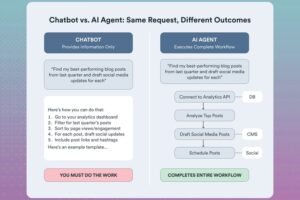

If the claim holds up, this is a step change. Agents aren’t just passing coding tests or toy benchmarks. They’re now competing in structured, adversarial environments designed for experts. That matters because CTF-style challenges compress the skills modern defenders need: triage, tool use, reverse engineering, iterative problem solving, and speed under constraints.

Here’s the part most people miss. The edge isn’t only model IQ. It’s orchestration: the scaffolding that plans, calls tools, evaluates results, and loops until a flag is found. That stack is where defensible advantage, and risk, now lives.
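To make the orchestration point concrete, here is a minimal sketch of a plan, act, evaluate loop. It is not Tenzai's system, whose internals have not been published. Everything in it is an assumption for illustration: the `flag{...}` format, the `run_agent` and `find_flag` names, the two toy tools, and the scripted planner that stands in for a call to a hosted model.

```python
import base64
import re
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical flag format; real competitions each define their own.
FLAG_PATTERN = re.compile(r"flag\{[^}]+\}")

Step = Tuple[str, str, str]  # (tool name, argument, observation)
Planner = Callable[[List[Step]], Tuple[str, str]]


def find_flag(text: str) -> Optional[str]:
    """Evaluate step: return the first flag-shaped string, if any."""
    match = FLAG_PATTERN.search(text)
    return match.group(0) if match else None


def run_agent(
    planner: Planner,
    tools: Dict[str, Callable[[str], str]],
    max_steps: int = 8,
) -> Tuple[Optional[str], List[Step]]:
    """Plan, call a tool, check the result, and repeat until a flag appears."""
    history: List[Step] = []
    for _ in range(max_steps):
        tool_name, arg = planner(history)          # plan
        tool = tools.get(tool_name)
        try:                                       # act
            observation = tool(arg) if tool else f"unknown tool: {tool_name}"
        except Exception as exc:                   # feed failures back to the planner
            observation = f"tool error: {exc}"
        history.append((tool_name, arg, observation))
        flag = find_flag(observation)              # evaluate
        if flag:
            return flag, history
    return None, history                           # step budget exhausted


# Toy challenge: a "file" holding a base64-encoded flag.
FILES = {"challenge.txt": base64.b64encode(b"flag{demo_only}").decode()}

TOOLS: Dict[str, Callable[[str], str]] = {
    "read_file": lambda name: FILES.get(name, ""),
    "b64decode": lambda data: base64.b64decode(data).decode(),
}


def scripted_planner(history: List[Step]) -> Tuple[str, str]:
    """Stand-in for a model call: read the file, then decode what came back."""
    if not history:
        return ("read_file", "challenge.txt")
    return ("b64decode", history[-1][2])


flag, steps = run_agent(scripted_planner, TOOLS)
print(flag, len(steps))
```

In a real agent the planner would be a model call that sees the history, and the step budget and error feedback are where most of the tuning effort tends to go.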

The Actual Move

Tenzai put its autonomous agent into six top-tier hacking competitions and reported the following:

- It placed in the top 1% versus 125,000+ human contestants across all events.

- The system used tailored agents built on OpenAI and Anthropic models.

- Tasks mirrored CTF domains: web, crypto, reversing, forensics, and exploit development.

- The agent followed competition rules and submitted valid flags.

“Built on models from OpenAI and Anthropic, it was tuned for elite cyber games.”

The company framed the run as proof that agentic systems can handle complex, multi-step cybersecurity tasks with minimal human intervention. The result spread quickly across tech media and social platforms, signaling market attention beyond the security community.

The Why Behind the Move

Zoom out and the pattern becomes obvious: agentic AI is crossing from benchmarks to battlegrounds where speed, tooling, and evaluation loops decide outcomes.

• Model

Foundational reasoning comes from OpenAI and Anthropic models. The real leverage likely came from agent scaffolding: planning, tool use, code execution, self-checks, and fast retries. The win suggests that well-instrumented agents can unlock outsized capability from today’s models.

• Traction

Top-1% across 125k+ is strong social proof. It’s a narrative wedge for pilots with enterprises that already run internal CTFs for upskilling and red teaming.

• Valuation / Funding

No funding details surfaced. Expect inbound if they convert this into repeatable enterprise workflows: vuln triage, exploit reproduction, and remediation drafts.

• Distribution

CTF credibility is a distribution hack. Security leaders trust demonstrated capability under rules and pressure. Publishing reproducible runs and leaderboards could compound reach.

• Partnerships & Ecosystem Fit

Obvious partners: cloud security platforms, MSSPs, training platforms, and bug bounty ecosystems. Tool adapters into Ghidra, Burp, Z3, and cloud scanning pipelines turn a demo into a platform.
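A tool adapter can be as small as a uniform wrapper around a command-line program. The sketch below is one possible shape under stated assumptions: `ToolAdapter`, `CommandAdapter`, `ToolResult`, and `Registry` are hypothetical names, and the demo wraps the Python interpreter itself rather than Ghidra, Burp, or Z3, whose real interfaces are not modeled here.

```python
import subprocess
import sys
from dataclasses import dataclass, field
from typing import Dict, List, Protocol


@dataclass
class ToolResult:
    ok: bool
    output: str


class ToolAdapter(Protocol):
    """The uniform surface an agent sees, whatever tool sits behind it."""
    name: str

    def run(self, args: List[str]) -> ToolResult: ...


@dataclass
class CommandAdapter:
    """Wraps any command-line tool behind the ToolAdapter interface."""
    name: str
    base_cmd: List[str]
    timeout_s: float = 30.0

    def run(self, args: List[str]) -> ToolResult:
        try:
            proc = subprocess.run(
                self.base_cmd + args,
                capture_output=True,
                text=True,
                timeout=self.timeout_s,
            )
        except (OSError, subprocess.TimeoutExpired) as exc:
            return ToolResult(False, str(exc))
        output = proc.stdout.strip() or proc.stderr.strip()
        return ToolResult(proc.returncode == 0, output)


@dataclass
class Registry:
    """Name-to-adapter lookup so a planner can request tools by name."""
    adapters: Dict[str, ToolAdapter] = field(default_factory=dict)

    def register(self, adapter: ToolAdapter) -> None:
        self.adapters[adapter.name] = adapter

    def call(self, name: str, args: List[str]) -> ToolResult:
        adapter = self.adapters.get(name)
        if adapter is None:
            return ToolResult(False, f"unknown tool: {name}")
        return adapter.run(args)


# Demo adapter: the current Python interpreter echoing its arguments.
echo = CommandAdapter(
    name="py_echo",
    base_cmd=[sys.executable, "-c", "import sys; print(' '.join(sys.argv[1:]))"],
)
registry = Registry()
registry.register(echo)
result = registry.call("py_echo", ["hello", "world"])
print(result)
```

Returning a structured result instead of raising keeps failures visible to the planning loop, and every call through the registry is a natural place to log usage data.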

• Timing

Agentic security is timely. Boards ask for AI leverage in defense; attackers already use automation. DARPA-style challenges normalized “AI-for-cyber” as a legitimate path, not a gimmick.

• Competitive Dynamics

Vendors are racing to ship “autonomous sec ops” features. The moat won’t be the base model; it will be proprietary evaluation loops, tool integrations, data flywheels, and incident-time UX.

• Strategic Risks

- CTF ≠ production: real systems have messy telemetry, auth, rate limits, and legal guardrails.

- Safety and misuse: offensive capability can be dual-use.

- Vendor dependence: relying on external LLM APIs introduces latency, cost, and privacy constraints.

- Benchmarks decay: once tasks are public, they leak into training data and models overfit to them, so scores stop measuring real capability.

What Builders Should Notice

- The moat isn’t the model; it’s orchestration plus evaluation.

- Convert showcases into workflows customers can run weekly, not yearly.

- Ship tool adapters first; they create stickiness and data.

- Treat safety as product, not policy. Guardrails, scopes, and audit trails are features.

- Benchmarks age fast. Build your own continuous evals tied to buyer outcomes.
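The "safety as product" point above can be sketched as a scope check that wraps every agent action and writes an audit entry whether or not the action is allowed. This is an illustrative assumption about how such a guardrail might look, not a description of any vendor's product; `Scope`, `AuditLog`, and `guarded` are hypothetical names, and the fake scan function stands in for a real tool call.

```python
import ipaddress
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Union

Network = Union[ipaddress.IPv4Network, ipaddress.IPv6Network]


@dataclass
class Scope:
    """Targets the customer has explicitly authorized."""
    allowed_networks: List[Network]

    def permits(self, target: str) -> bool:
        try:
            addr = ipaddress.ip_address(target)
        except ValueError:
            return False  # anything that is not a plain IP is out of scope
        return any(addr in net for net in self.allowed_networks)


@dataclass
class AuditLog:
    """Append-only record of every attempted action."""
    entries: List[Dict[str, object]] = field(default_factory=list)

    def record(self, action: str, target: str, allowed: bool) -> None:
        self.entries.append(
            {"ts": time.time(), "action": action, "target": target, "allowed": allowed}
        )


def guarded(
    scope: Scope,
    log: AuditLog,
    action: str,
    target: str,
    fn: Callable[[str], str],
) -> str:
    """Run fn(target) only if the target is in scope; log the attempt either way."""
    allowed = scope.permits(target)
    log.record(action, target, allowed)
    if not allowed:
        raise PermissionError(f"{target} is outside the authorized scope")
    return fn(target)


def fake_scan(target: str) -> str:
    """Placeholder for a real tool invocation."""
    return f"scanned {target}"


scope = Scope([ipaddress.ip_network("10.0.0.0/24")])
log = AuditLog()

print(guarded(scope, log, "scan", "10.0.0.5", fake_scan))
try:
    guarded(scope, log, "scan", "8.8.8.8", fake_scan)
except PermissionError as exc:
    print("blocked:", exc)
print([(e["target"], e["allowed"]) for e in log.entries])
```

Logging before the permission check fires means denied attempts show up in the trail too, which is usually what an auditor wants to see.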

Buildloop reflection

“In AI, the advantage compounds in the loop you control.”

Sources

Forbes — This Startup’s AI Beat 99% Of Humans In Six Elite Hacking Competitions

Facebook — Forbes: Every year, more than 100,000 seasoned cybersecurity pros compete

X — Forbes: This Startup’s AI Beat 99% Of Humans (image)

Instagram — Built on models from OpenAI and Anthropic…

LinkedIn — Bob Carver’s Post on AI hacking achievement

Facebook — Techmeme: Israeli startup Tenzai says its AI agent beat 99%

NewsBreak — This Startup’s AI Beat 99% Of Humans In Six Elite Hacking Competitions

X — Cybersecurity Boardroom: This Startup’s AI Beat 99% Of Humans