What Changed and Why It Matters

Founders are moving inference from cloud GPUs to small, rugged boxes at the edge. Think mini PCs, discrete GPUs, and new NPUs sitting next to cameras, sensors, and machines.

The trigger is practical: unit economics, latency, and control. Builders are realizing many workloads don’t need hyperscale GPUs if the model, data, and decision loop live on-site.

Here’s the part most people miss: compute follows data — not the other way around.

A founder in r/startups recently described ditching cloud GPUs for on-device optimization and getting immediate cost relief. Meanwhile, industry blogs and hardware vendors are pushing purpose-built “AI mini PCs” for factories and surveillance. Chip makers and startups are also exploring GPU alternatives such as compute-in-memory to break memory bottlenecks. The signal is consistent: edge is becoming a first-class deployment target.

The Actual Move

What’s happening on the ground:

- Bootstrapped founders are optimizing models for mobile and edge chipsets to avoid per-token or per-inference cloud costs.

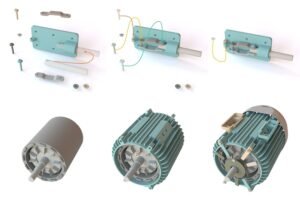

- AI mini PCs are being deployed across Industry 4.0: vision inspection, robotics, predictive maintenance, and smart surveillance — where milliseconds and on-site privacy matter.

- Hardware stacks vary: compact x86 mini PCs with consumer GPUs, embedded devices with NPUs/TPUs, and ruggedized boxes designed for dust, vibration, and heat.

- The hardware ecosystem is widening. Beyond GPUs, vendors tout associative or memory-centric processors to reduce data movement and energy.

- The market conversation now asks a blunt question: do you really need a GPU for every generative AI system, or can CPUs/NPUs handle right-sized models at the edge?

“Zero marginal cost: don’t pay for the compute; the user’s battery does.”

“Pocket‑sized AI PCs pack cloud power for real‑time edge computing and automation.”

“GPUs weren’t designed for AI.”

Compute-in-memory chips aim to “put compute in the memory itself” to bypass bandwidth limits.

The Why Behind the Move

• Model

Smaller, domain-tuned models now meet many edge tasks. Quantization and distillation unlock real-time inference on modest devices. The model isn’t the moat; the deployment and feedback loop are.
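
To make the quantization point concrete, here is a minimal sketch of symmetric per-tensor int8 quantization in plain Python. The function names and weight values are made up for illustration, and production toolchains typically use more refined schemes (per-channel scales, calibration data). The idea it shows is the basic one: store each weight as a small integer plus one shared scale, trading a bounded rounding error for roughly a 4x size reduction versus 32-bit floats.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: floats -> integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # One shared scale maps the largest magnitude onto 127.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from the integers and the scale."""
    return [v * scale for v in q]


# Hypothetical weights, chosen only to illustrate the round trip.
weights = [0.82, -1.27, 0.05, 0.0, 0.4]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Rounding error per weight is bounded by half a quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, max_error)
```

Distillation is a separate technique (training a smaller model to mimic a larger one) and is not shown here.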

• Traction

Edge deployments show fast ROI where latency or privacy is existential: factory QA, retail loss prevention, logistics, and on-prem analytics. Real-time beats round-trip.

• Valuation / Funding

A founder trend: capital efficiency over vanity rounds. Media narratives point to startups declining easy money, citing falling cloud GPU prices and stronger open source. But the deeper move is cost control: with on-device inference, compute capacity grows with each customer's own hardware instead of growing your AWS bill.

• Distribution

Winners bundle hardware, calibration, and ongoing model updates into a single SLA. Channel partners (OEMs, systems integrators, MSPs) carry these boxes into legacy workflows.

• Partnerships & Ecosystem Fit

Edge AI lives or dies on integration. Hardware vendors offer rugged mini PCs; software teams ship containerized models; SIs stitch into PLCs, VMS, and MES. The strongest teams make these pieces feel like one product.

• Timing

Open-weight models matured. GPU supply shocks eased. Regulators tightened rules on data movement. Egress fees remained stubborn. It’s the perfect window for edge-first playbooks.

• Competitive Dynamics

Cloud remains king for training and large-scale inference. But inference for many high-value tasks is local-first. Alternative silicon (compute-in-memory, NPUs) pressures the GPU hegemony, especially where memory bandwidth dominates.
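
One way to see why memory bandwidth can dominate: for single-stream text generation, a common back-of-envelope estimate assumes every weight is read from memory once per generated token, so throughput is capped at roughly bandwidth divided by model size. The sketch below encodes that rule of thumb. The function name and the example figures (a 7B-parameter model, 100 GB/s of bandwidth) are illustrative assumptions rather than measurements of any device, and the estimate ignores KV-cache traffic, compute limits, and batching.

```python
def decode_tokens_per_second_ceiling(params_billion, bits_per_weight, bandwidth_gb_s):
    """Rough upper bound on batch-size-1 decode speed when weight reads dominate."""
    # Billions of parameters times bytes per parameter gives model size in GB.
    model_gb = params_billion * bits_per_weight / 8
    return bandwidth_gb_s / model_gb


# Illustrative numbers only: the same hypothetical device, two weight precisions.
fp16_ceiling = decode_tokens_per_second_ceiling(7, 16, 100)
int4_ceiling = decode_tokens_per_second_ceiling(7, 4, 100)
print(round(fp16_ceiling, 1), round(int4_ceiling, 1))
```

Under these assumptions, shrinking the weights by 4x raises the ceiling by 4x without any extra compute, which is why right-sized models and memory-centric silicon both target the same bottleneck.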

• Strategic Risks

- Fleet ops are hard: updates, observability, and rollback at the edge.

- Thermal, power, and physical reliability can break SLAs.

- Fragmented hardware adds QA overhead.

- TCO is easy to underestimate once support and on-site service scale.

- Vendor lock-in across GPUs, NPUs, and SDKs.
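
The first risk above, updates with rollback, can be sketched in a few lines. This is a simplified illustration, not a fleet-management implementation: `EdgeDevice`, `update_with_rollback`, and the `health_check` callback are hypothetical names, and a real deployment would more likely rely on A/B partitions or versioned container images with remote telemetry.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Tuple


@dataclass
class EdgeDevice:
    device_id: str
    active_version: str
    previous_version: Optional[str] = None
    history: List[Tuple[str, str]] = field(default_factory=list)


def update_with_rollback(
    device: EdgeDevice,
    new_version: str,
    health_check: Callable[[EdgeDevice], bool],
) -> bool:
    """Switch to new_version; revert to the prior version if the check fails."""
    device.previous_version = device.active_version
    device.active_version = new_version
    device.history.append(("update", new_version))
    if health_check(device):
        return True
    # Health check failed: restore the last known-good version.
    device.active_version = device.previous_version
    device.history.append(("rollback", device.active_version))
    return False


# Simulate a bad release: the (hypothetical) check rejects "v2".
device = EdgeDevice("line-3-camera", active_version="v1")
ok = update_with_rollback(device, "v2", lambda d: d.active_version != "v2")
print(ok, device.active_version, device.history)
```

Keeping the history on the device record is one simple way to feed observability: the fleet dashboard can show which boxes rolled back and when.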

What Builders Should Notice

- Compute follows data. Put models where the signal originates.

- Small models win when they’re close, tuned, and continuously adapted.

- Bundle the box, the model, and the SLA — customers buy outcomes.

- Design offline-first. Build a clean remote-ops story from day one.

- Measure TCO, not just cloud bills: deployment, updates, thermals, support.
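
The TCO point lends itself to a quick break-even calculation. The sketch below compares a pay-per-inference cloud bill with an edge box that has an upfront hardware cost plus monthly operations (updates, support, on-site service). Every figure and function name here is a made-up placeholder; plug in your own numbers.

```python
import math


def cloud_cost(months, monthly_inferences, price_per_inference):
    """Cumulative cloud spend: pure usage-based pricing."""
    return months * monthly_inferences * price_per_inference


def edge_cost(months, hardware_upfront, monthly_ops):
    """Cumulative edge spend: hardware once, then ongoing operations."""
    return hardware_upfront + months * monthly_ops


def break_even_month(monthly_inferences, price_per_inference, hardware_upfront, monthly_ops):
    """First whole month where edge is no more expensive than cloud, or None."""
    monthly_saving = monthly_inferences * price_per_inference - monthly_ops
    if monthly_saving <= 0:
        return None  # Edge never pays back under these assumptions.
    return math.ceil(hardware_upfront / monthly_saving)


# Hypothetical site: 2M inferences/month at $0.0005 each,
# versus a $2,800 box with $250/month of ops and support.
month = break_even_month(2_000_000, 0.0005, 2_800, 250)
print(month, cloud_cost(month, 2_000_000, 0.0005), edge_cost(month, 2_800, 250))
```

If monthly ops exceed the cloud bill, the function returns `None`, which is the scenario the strategic-risk list warns about: support and service costs quietly erasing the hardware savings.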

Buildloop reflection

The edge isn’t a place. It’s a decision to keep intelligence where value is created.

Sources

- Reddit — Bootstrapping AI: Why I ditched Cloud GPUs for On-Device …

- Forbes — “Seed-Strapped” AI Startups Are Refusing Millions

- LinkedIn — Lauri Piispanen – GPUs weren’t designed for AI.

- Medium — Pocket‑Sized Powerhouses: How AI Mini PCs Are …

- TechRadar — The associative processing unit wants to displace Nvidia’s …

- Premio Inc. — The Role of AI Mini PCs for Edge AI and Industry 4.0

- Network World — Startups pursue GPU alternatives for AI

- YouTube — Should You Always Use GPUs for Generative AI?

- TauroTech — Use of GPUs in Edge AI Computing