What Changed and Why It Matters

The center of gravity in AI has shifted from models to data operations. Simple, task-based labeling is fading. In its place: model-in-the-loop workflows, synthetic data, and domain-grade QA.

Yahoo Finance amplified a blunt claim from Turing’s CEO:

“The era of data-labeling companies is over.”

That doesn’t mean labels don’t matter. It means the old model of cheap, manual tagging at scale can’t keep up with frontier systems. Costs are high, bias sneaks in, and labeling iteration lags behind the product cycle.

“76% of labels stay human—legacy tech.”

That line, shared in a LinkedIn post, captures the choke point. As models improve, so must the sophistication of labeling ops. Here’s the part most people miss: the next defensible moat isn’t architecture. It’s owning the feedback, labeling, and provenance loop.

The Actual Move

This moment is a pattern, not a press release. Across the ecosystem, three moves are converging:

- From manual to model-in-the-loop

  - Reddit threads surface a clear shift: AI increasingly generates and labels its own data. Active learning, self-training, and evaluator models are moving from research to production.

  - YouTube ops leaders (e.g., MLtwist’s COO) emphasize end-to-end process: gold standards, sampling, consensus, and continuous QA around model-assisted labeling.

- From generic labor to domain-grade supervision

  - Substack coverage tracks the workforce evolution, from low-cost stop-sign tagging to specialists who understand law, healthcare, finance, and nuanced edge cases.

  - Damco’s enterprise guides focus on governance: annotation guidelines, inter-annotator agreement, drift monitoring, and privacy.

- From labels to provenance

  - Fortune reports the friction of labeling AI-generated content using C2PA credentials. It’s hard in the wild, but it’s becoming table stakes for trust and compliance.
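To make the model-in-the-loop idea concrete, here is a minimal sketch, assuming a classifier that returns a label plus a confidence score. Confident predictions are accepted, the rest go to human annotators, and human votes are merged by majority consensus. The function names `route` and `consensus` and both threshold values are illustrative assumptions, not something the sources above prescribe.

```python
from collections import Counter

# Hypothetical cutoff: below this, the model's label is not trusted.
CONFIDENCE_THRESHOLD = 0.9


def route(item_id, model_label, model_confidence, threshold=CONFIDENCE_THRESHOLD):
    """Accept confident model labels; flag everything else for human review."""
    if model_confidence >= threshold:
        return {"id": item_id, "label": model_label, "source": "model"}
    return {"id": item_id, "label": None, "source": "needs_human"}


def consensus(votes, min_agreement=0.6):
    """Majority vote over human labels.

    Returns the winning label, or None when agreement is too low
    and the item should be escalated (for example, to a domain expert).
    """
    if not votes:
        return None
    label, count = Counter(votes).most_common(1)[0]
    return label if count / len(votes) >= min_agreement else None


if __name__ == "__main__":
    print(route("img-1", "stop_sign", 0.97))   # accepted from the model
    print(route("img-2", "yield_sign", 0.55))  # sent to annotators
    print(consensus(["stop_sign", "stop_sign", "yield_sign"]))
```

In practice the threshold would be tuned against a gold-standard set, and a sample of the auto-accepted items would still be audited by humans.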

Another thread: control. A strategy note from Heroik Media frames a broader choke point: platforms throttle usage at the interface. If you don’t own your data and labeling ops, you’re building behind other people’s gates.

“Labeling AI-generated content is not as easy as it seems.”

The signal is consistent: labeling is no longer a commodity function. It’s a core, integrated capability.

The Why Behind the Move

Founders aren’t optimizing for cheaper labels. They’re optimizing for faster learning loops, safer outputs, and durable moats.

• Model

Frontier performance depends on high-signal labels: adversarial examples, nuanced edge cases, evaluator rubrics, and RLAIF/RLHF workflows. Model-assisted pipelines reduce noise and accelerate iteration.

• Traction

Speed wins. Owning labeling ops cuts cycle time from weeks to days. It enables rapid red-teaming, content safety tuning, and product-specific datasets.

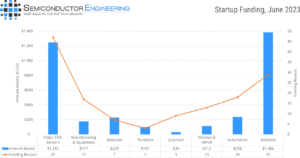

• Valuation / Funding

Investors now ask about data moats, not just benchmarks. A defensible ops stack—guidelines, QA, evaluators, and provenance—compounds value across models.

• Distribution

Labeling ops wired into product telemetry become a compounding engine. Every user interaction sharpens the model.

• Partnerships & Ecosystem Fit

Pure-play labeling vendors fade unless they offer model-in-the-loop, domain experts, and tight QA. The winners become co-owners of the learning loop, not task brokers.

• Timing

Regulation and platform policies push provenance. The cost curve pushes automation. The talent curve pushes specialization.

• Competitive Dynamics

When model access commoditizes, data differentiation dominates. Owning labeling ops becomes the quiet wedge.

• Strategic Risks

- Over-automating can amplify bias.

- Weak guidelines destroy consistency.

- Synthetic data can drift from reality.

- Provenance gaps invite compliance risk.
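One of these risks, synthetic data drifting from reality, can be given a rough number. The sketch below compares the label distribution of a real sample against a synthetic one using total variation distance (0 means identical distributions, 1 means no overlap). The metric choice and the function names are assumptions made for illustration; it only looks at label frequencies, so it will not catch drift inside a class.

```python
from collections import Counter


def label_distribution(labels):
    """Map each label to its relative frequency."""
    if not labels:
        raise ValueError("labels must be non-empty")
    n = len(labels)
    return {label: count / n for label, count in Counter(labels).items()}


def total_variation(real_labels, synthetic_labels):
    """Total variation distance between two label distributions."""
    p = label_distribution(real_labels)
    q = label_distribution(synthetic_labels)
    keys = set(p) | set(q)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in keys)


if __name__ == "__main__":
    real = ["fraud"] * 5 + ["ok"] * 95
    synthetic = ["fraud"] * 30 + ["ok"] * 70
    # A large value suggests the synthetic set over- or under-represents a class.
    print(total_variation(real, synthetic))
```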

What Builders Should Notice

- Own the feedback loop. Tie product telemetry to model-in-the-loop labeling and QA.

- Treat guidelines as code. Version them, test them, and measure annotator agreement after every change.

- Use synthetic data and self-labeling, then validate with evaluator models and domain experts.

- Make provenance a feature, not an afterthought. C2PA and audit trails are trust levers.

- Don’t outsource your moat. Partners help, but the learning loop must be yours.
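"Measure annotator agreement" can be as simple as computing Cohen's kappa between two annotators on the same items. Kappa corrects raw agreement for the agreement you would expect by chance. The sketch below is a plain-Python version under that standard definition. The function name and the idea of rerunning it whenever the guidelines change are illustrative assumptions.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labeled the same items in the same order."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)

    # Observed agreement: share of items where both annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n

    # Chance agreement: probability both pick the same label independently,
    # given each annotator's own label frequencies.
    freq_a = Counter(labels_a)
    freq_b = Counter(labels_b)
    expected = sum(freq_a[k] * freq_b[k] for k in freq_a) / (n * n)

    if expected == 1.0:
        # Both annotators used one identical label for every item.
        return 1.0
    return (observed - expected) / (1 - expected)


if __name__ == "__main__":
    annotator_1 = ["toxic", "ok", "ok", "toxic", "ok", "ok"]
    annotator_2 = ["toxic", "ok", "toxic", "toxic", "ok", "ok"]
    print(round(cohens_kappa(annotator_1, annotator_2), 3))
```

A falling kappa after a guideline revision is a signal that the new wording is ambiguous, which is the kind of regression a "guidelines as code" workflow is meant to catch.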

Buildloop reflection

“In AI, models generalize. Moats operationalize.”

Sources

- Yahoo Finance — ‘The era of data-labeling companies is over,’ says the CEO …

- Medium — Why Data Labeling Has Become the New Battleground in …

- LinkedIn — How manual labeling is choking AI progress and costs.

- Damco Group — Data Labeling Challenges & Strategic Solutions for AI …

- Reddit — Data Labeling Is the Hot New Thing in AI | The race to build …

- Heroik Media — AI’s Big Choke Point: How To Ditch the Gatekeepers & Unlock …

- DataGravity — The Future of Data Labeling: From Stop Signs to AI Specialists

- YouTube — Managing Data Labeling Ops for Success with Audrey Smith …

- Fortune — Labeling AI-generated content is not as easy as it seems

- GitHub — My personal insights using Rave (training compendium)