What Changed and Why It Matters

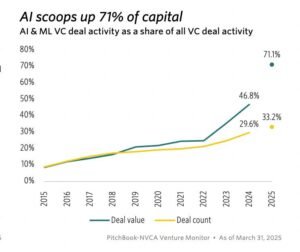

Investor mood is shifting from euphoria to scrutiny. Hardware spend has surged. Now the market wants proof the AI flywheel actually spins.

“Investors had grown weary that the massive run-up in spending on AI hardware might not be sustainable, stoking fears of a bubble…”

Cheap debt is back. Big Tech is issuing bonds to finance multi-year AI buildouts, extending runway without tapping equity.

“Big Tech is on a bond binge to fund its AI bets… OpenAI and AMD have signed a multi-billion-dollar deal, under which AMD will supply hundreds of thousands of AI chips to OpenAI…”

Meanwhile, founders are racing to capture proprietary inputs—speech, gaze, biometrics, environment—before models commoditize.

“They’re building proprietary data moats around biometric input that no foundation model can replicate.”

Here’s the part most people miss: the moat isn’t the model; it’s the capture of unique, hard-to-reproduce input streams at scale.

The Actual Move

Several concrete signals converged this week:

- Financing: Axios reported a Big Tech bond binge to fund AI expansion, keeping cash flexible while locking in low rates.

- Supply diversification: Reports of a multi-billion-dollar AMD–OpenAI chip supply agreement point to a hedging strategy beyond one vendor.

- Sentiment whiplash: CNBC flagged investor fatigue with unchecked AI capex and rising bubble talk.

- Early-stage heat: On X, investors discussed a startup in talks to raise a round led by Peter Thiel at a $2B valuation, evidence that selective premium pricing persists.

- Strategy discourse: Industry voices emphasized proprietary, biometric-grade inputs as the next durable moat.

- Analyst lens: Breaking Analysis (theCUBE Research) continues to track data center capex and AI infrastructure adoption patterns, which is useful context for separating real demand from narrative.

The Why Behind the Move

Founders and operators are “collaring” risk—pairing aggressive AI bets with downside protection.

• Model

Foundation models converge in capability. Durable advantage shifts to data generation and input harvesting: sensors, wearables, enterprise workflows, and ambient capture.

• Traction

Distribution that passively collects high-fidelity streams wins. Think voice, vision, on-device context, and continuous telemetry embedded in real work.

• Valuation / Funding

Cheap debt lets platforms scale infra without immediate dilution. Select startups still command $2B+ valuations—when they show clear data access or distribution.
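The debt-versus-dilution trade-off can be made concrete with a little arithmetic. The sketch below is illustrative only: the capex figure, pre-money valuation, and coupon rate are hypothetical placeholders, not numbers from any of the reports cited here, and the function names are made up for this example.

```python
def equity_dilution(raise_amount: float, pre_money: float) -> float:
    """Fraction of the company sold when raising `raise_amount` at `pre_money`."""
    return raise_amount / (pre_money + raise_amount)


def annual_interest(principal: float, coupon_rate: float) -> float:
    """Yearly cash cost of borrowing `principal` at a fixed `coupon_rate`."""
    return principal * coupon_rate


# Hypothetical inputs, chosen only to show the shape of the comparison.
capex = 10e9        # amount needed for the buildout
pre_money = 90e9    # assumed valuation before an equity raise
coupon = 0.05       # assumed fixed bond coupon

dilution = equity_dilution(capex, pre_money)
interest = annual_interest(capex, coupon)

print(f"Equity route: existing holders give up {dilution:.1%} of the company")
print(f"Debt route: no dilution, about ${interest / 1e9:.2f}B per year in interest")
```

The comparison ignores taxes, covenants, and refinancing risk. It only shows why borrowing can look attractive while rates are low: the cost is a recurring cash payment rather than a permanent share of ownership.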

• Distribution

Own the input surface. LLM access is a commodity; controlling capture points is not. Hardware, SDKs, and default integrations matter more than model knobs.

• Partnerships & Ecosystem Fit

The AMD–OpenAI deal signals a broader supplier set and more negotiation leverage. Expect more multi-vendor strategies across compute, networking, and memory.

• Timing

Rate stability plus market FOMO creates a window to lock capital and inventory. Miss it, and you face pricier chips and tighter terms.

• Competitive Dynamics

NVIDIA dependence is a risk. Multi-sourcing and pre-buys reduce exposure. Meanwhile, startups differentiate via proprietary datasets, not bespoke models.

• Strategic Risks

- Overbuild and idle capacity if demand lags

- Privacy and policy friction around biometric capture

- Rising CAC if input capture requires new hardware

- Narrative risk: hype cycles drive misallocation

What Builders Should Notice

- The moat is upstream. Own input capture, not just inference.

- Finance is strategy. Debt can extend runway for infra-heavy bets.

- Supplier diversity is a feature. Hedge compute concentration early.

- Ship capture, not just chat. Design for continuous, permissioned data.

- Auditable ROI beats vibes. Stress-test assumptions with real usage data.

“Sophisticated investors must use data to stress-test their assumptions before market conditions turn.”

Buildloop reflection

In AI, owning the inputs is owning the outcome. Capture compels compounding.

Sources

- CNBC Tech — Investors had grown weary that the massive run-up in …

- theCUBE Research — Breaking Analysis

- X (formerly Twitter) — Gavin Purcell (@gavinpurcell) / Posts

- LinkedIn — AI Bubble Forms as Big Tech Overinvests in Data Centers

- Axios — Big Tech is on a bond binge to fund its AI bets, and …

- University of Texas System — 1935 Minutes

- AITRAP — AI hype Tracking Project

- U.S. Government Publishing Office — HOUSE OF REPRESENTATIVES

- Tempo Funding — Media

- Value Ventures — Where Compounding Knowledge Meets …