What Changed and Why It Matters

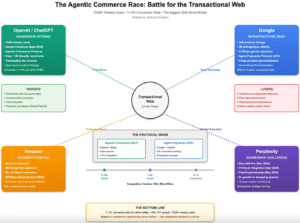

AI agents don’t browse the web the way humans do. They need structured, fresh, and programmable access, and that need is now shaping new infrastructure.

Parallel Web Systems is building search and tools for AI agents, not people. Their bet: the web’s “primary user” is changing — and infra must change with it.

“We started Parallel Web Systems because the primary user of the web is changing: from humans to AIs.”

This isn’t just a product update; it’s a directional signal. As parallel, multi-agent workflows spread, builders need a web that’s fast, composable, and trustworthy. Practitioner discussions point the same way: parallel agents are on the rise, but they need purpose-built plumbing.

The Actual Move

Parallel is positioning itself as “infrastructure for intelligence on the web.” Its site emphasizes a suite of agent and tool APIs with enterprise controls, including SOC 2 Type II compliance.

Parallel “develops a suite of agents and tool APIs for building AI with powerful access to the open web.”

The company introduced an agent-first search product designed for developers.

It “gives AI agents fast, accurate, and always-fresh web search capabilities, delivering AI agents exactly the context they need.”

On November 6, 2025, Parallel launched the Parallel Search API, described as “designed specifically for AI agents.” The pitch centers on speed, freshness, and developer ergonomics — the core needs of agentic systems.

On the funding side, Kleiner Perkins announced a Series A investment in Parallel and framed the company’s mission in market terms.

Parallel’s mission is to “keep the web open, transparent, and competitive as it transitions to its next user.”

Across the ecosystem, the “parallel agents” pattern is gaining ground:

“Parallel AI agents are redefining the entire workflow for modern software development.”

“Parallel agents work well for smaller well-defined tasks and backend logic … [but] struggle with complex architectural decisions.”

Hands-on builders echo both the upside and the guardrails:

“It runs multiple AI agents in parallel on your laptop and lets them work on different tasks at the same time.”

“You need to manage an AI coding agent carefully and course correct frequently.”

Together, these signals point to a clear need: web infrastructure built for concurrent, tool-using agents — not human browsers.

The Why Behind the Move

• Model

Parallel is an infrastructure company. The core product is a developer API that gives agents programmable access to the live web. The value is speed, structure, citations, and control — the inputs agents need to reason reliably.

• Traction

Community threads on Reddit and Hacker News show active demand for parallelized agent workflows and purpose-built search. Practitioners are wiring these setups together locally; teams now want robust, hosted infra with guardrails and fresh data.

• Valuation / Funding

Kleiner Perkins’ Series A validates the “web is changing users” thesis. It also buys time to build crawling, ranking, deduping, compliance, and enterprise features — the hard, unsexy moat in search infra.

• Distribution

Developer-first motion: simple APIs, clean docs, and easy integration into agent frameworks. SOC 2 Type II compliance widens the top of the funnel to include security-conscious teams.

• Partnerships & Ecosystem Fit

Best fit with agent stacks, RAG/knowledge products, and ops tools that need trustworthy external context. Expect integrations with orchestration frameworks, eval suites, and enterprise data pipelines.

• Timing

Agentic patterns are maturing from demos to workloads. As teams parallelize tasks, bottlenecks move to web I/O: rate limits, freshness, quality, and provenance. The timing is right to own this layer.

• Competitive Dynamics

Incumbent search targets human UX. General web APIs lack the agent-first contract: structured outputs, consistent freshness SLAs, and compliance. Specialized agent search can win on developer experience and reliability, not brand.
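To make the “agent-first contract” concrete, here is a minimal Python sketch of how an agent might enforce citations and a freshness budget on search results before passing them to a model. The `SearchResult` fields, the `usable_results` helper, and the one-day budget are hypothetical illustrations, not Parallel’s actual response schema or API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List, Optional


@dataclass(frozen=True)
class SearchResult:
    """Hypothetical agent-facing result; field names are assumptions, not Parallel's schema."""
    url: str              # citation the agent can show or verify
    title: str
    excerpt: str          # compact context to place in the model's prompt
    fetched_at: datetime  # when the source was last crawled (timezone-aware)


def usable_results(results: List[SearchResult], max_age: timedelta,
                   now: Optional[datetime] = None) -> List[SearchResult]:
    """Keep only results that carry an http(s) citation and meet the freshness budget."""
    if now is None:
        now = datetime.now(timezone.utc)
    return [
        r for r in results
        if r.url.startswith(("http://", "https://")) and now - r.fetched_at <= max_age
    ]


if __name__ == "__main__":
    now = datetime(2025, 11, 6, 12, 0, tzinfo=timezone.utc)
    results = [
        SearchResult("https://example.com/a", "Fresh page", "...", now - timedelta(hours=2)),
        SearchResult("https://example.com/b", "Stale page", "...", now - timedelta(days=30)),
        SearchResult("", "Uncited snippet", "...", now),
    ]
    kept = usable_results(results, max_age=timedelta(days=1), now=now)
    print([r.title for r in kept])  # ['Fresh page']
```

Rejecting uncited or stale results before they reach the model is one way to keep errors from compounding across an agent loop; an agent-first API would ideally return this metadata by default so the agent does not have to infer it.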

• Strategic Risks

- Content access: robots.txt, paywalls, and licensing can constrain coverage.

- Quality: deduplication, spam, and source ranking remain hard problems.

- Safety and provenance: agents amplify errors if citations are weak.

- Economics: web-scale crawling costs can outpace early revenue.

- Orchestration complexity: parallel agents can thrash without good task design.

What Builders Should Notice

- Build for agents, not humans: structured, cited, fresh outputs win.

- Speed compounds: sub-second search improves agent reliability and lowers cost.

- Trust is a moat: SOC 2, provenance, and safe crawling unlock enterprise customers.

- Start narrow: parallelize well-scoped tasks before architecture-level work.

- Measure everything: add evals, timeouts, and quality scoring to agent loops.
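The last two points can be sketched together. Below is a minimal asyncio example that fans out well-scoped tasks with bounded concurrency, enforces a per-task timeout, and attaches a toy quality score. It assumes a hypothetical `fake_agent` coroutine in place of a real agent call; none of these names come from Parallel or any specific agent framework, and the citation check stands in for a real eval.

```python
import asyncio
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class TaskResult:
    name: str
    output: Optional[str]
    score: float
    error: Optional[str] = None


async def fake_agent(task: str, delay: float) -> str:
    """Hypothetical stand-in for a real agent call (e.g. one web search plus a summary)."""
    await asyncio.sleep(delay)  # simulates network and model latency
    return f"answer for {task} [source: https://example.com/{task}]"


def score(output: str) -> float:
    """Toy quality score: 1.0 if the answer carries a citation, else 0.0."""
    return 1.0 if "[source:" in output else 0.0


async def run_task(name: str, delay: float, timeout: float) -> TaskResult:
    """Run one agent task with a hard timeout; a timeout becomes a result, not an exception."""
    try:
        output = await asyncio.wait_for(fake_agent(name, delay), timeout=timeout)
    except asyncio.TimeoutError:
        return TaskResult(name, None, 0.0, error="timeout")
    return TaskResult(name, output, score(output))


async def run_parallel(tasks: List[Tuple[str, float]], timeout: float = 1.0,
                       max_concurrency: int = 4) -> List[TaskResult]:
    """Fan out well-scoped tasks, capping how many run at once."""
    sem = asyncio.Semaphore(max_concurrency)

    async def bounded(name: str, delay: float) -> TaskResult:
        async with sem:
            return await run_task(name, delay, timeout)

    # gather preserves input order, so results line up with `tasks`
    return await asyncio.gather(*(bounded(n, d) for n, d in tasks))


if __name__ == "__main__":
    tasks = [("pricing", 0.01), ("changelog", 0.02), ("slow-crawl", 5.0)]
    for result in asyncio.run(run_parallel(tasks, timeout=0.5)):
        print(result)
```

Capping concurrency with a semaphore keeps parallel agents from flooding rate-limited web APIs, and returning a timeout as a result rather than raising lets the orchestrator decide whether to retry, re-scope, or drop the task.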

Buildloop reflection

Every market shift starts when someone optimizes for the next user — not the current one.

Sources

- Kleiner Perkins — Parallel: Building the infrastructure for AI

- Parallel Web Systems — Introducing Parallel | Web Search Infrastructure for AIs

- Reddit — I built a system where AI agents work in parallel while you …

- Medium — Parallel AI Agents: The Next Wave Transforming Software …

- Department of Product — What is parallel AI agent coding? An in-depth guide for …

- Reddit — Parallel Search API Launch: Revolutionizing AI Agent Web …

- Parallel Web Systems — Parallel Web Systems | Infrastructure for intelligence on the web

- LinkedIn — Introducing Parallel Web Systems: A New Era for AI …

- Hacker News — Parallel AI agents are a game changer