What Changed and Why It Matters

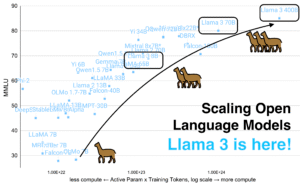

A clear pattern is forming across the AI ecosystem: cost pressure is forcing a new stack. The winning setup centralizes models behind one API, adds orchestration, and bakes in caching, security, and per-call spend controls.

“Platforms could slash integration costs by up to 80%, foster resilience against supplier lock-in.”

That line captures the shift. Aggregation and orchestration are beating one-off integrations. Teams are routing tasks to cheaper models, caching aggressively, and only escalating to premium models when needed.

“Drive cache hit rates up to 80%, returning results in milliseconds while slashing API bills.”

Zoom out and the pattern becomes obvious: the control plane matters more than any single model. Google Research recently reported that centralized orchestration can deliver roughly 80% higher agent performance than single-agent systems on some tasks. That’s not a feature; it’s an architecture.
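To make the cost arithmetic behind caching and routing concrete, here is a minimal sketch. The function name, the prices, and the hit and routing rates are all hypothetical, and it assumes cache hits cost nothing and each cache miss is served by exactly one model.

```python
def blended_cost_per_request(cache_hit_rate, cheap_share, cheap_cost, premium_cost):
    """Expected model spend per request.

    Assumes cache hits are free and each miss goes to exactly one model.
    cheap_share is the fraction of misses served by the cheap model.
    """
    if not (0.0 <= cache_hit_rate <= 1.0 and 0.0 <= cheap_share <= 1.0):
        raise ValueError("rates must be between 0 and 1")
    miss_rate = 1.0 - cache_hit_rate
    cost_per_miss = cheap_share * cheap_cost + (1.0 - cheap_share) * premium_cost
    return miss_rate * cost_per_miss


# Hypothetical prices: $0.001 per cheap call, $0.02 per premium call.
baseline = blended_cost_per_request(0.0, 0.0, 0.001, 0.02)   # no cache, all premium
optimized = blended_cost_per_request(0.5, 0.8, 0.001, 0.02)  # 50% hits, 80% of misses cheap
savings = 1.0 - optimized / baseline
print(f"{savings:.0%}")  # 88%
```

With these made-up inputs, most of the saving comes from keeping premium calls rare. Real numbers depend on your traffic and on whether escalated requests pay for both models.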

The Actual Move

Here’s what builders are actually doing, based on what we’re seeing across posts, research, and case studies:

- One-API aggregation to reduce lock-in and simplify integrations. Teams plug into a single interface, not ten.

- Per-call tracking and smart caching to crush token spend. Prompt and response caches return results in milliseconds.

- Multi-model routing so cheap, fast models do most work. Premium models are reserved for hard cases.

- Centralized orchestration to coordinate agents, tools, and evaluations. Performance rises, chaos drops.

- Security and policy layers that keep pace with shipping speed. Think guardrails, audit, and model-agnostic controls.

- Enterprise stack upgrades: agents, fine-tunes, and custom silicon to hit ROI targets, not just demos.

“80% of what my agents do runs on cheaper, faster models. The expensive model only comes out when the task actually requires it.”
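A cheap-first router with escalation can be sketched as follows. This is an illustration, not any vendor’s API: `EscalatingRouter`, the stub models, and the confidence signal are all assumptions. In practice the "evidence" for escalation might be a validator, an eval score, or a schema check.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

# A model call returns (answer, confidence in [0, 1]). Both are stand-ins.
ModelFn = Callable[[str], Tuple[str, float]]


@dataclass
class EscalatingRouter:
    cheap: ModelFn
    premium: ModelFn
    min_confidence: float = 0.7  # hypothetical threshold
    cheap_calls: int = 0
    premium_calls: int = 0

    def run(self, prompt: str) -> str:
        # Always try the cheap model first.
        self.cheap_calls += 1
        answer, confidence = self.cheap(prompt)
        if confidence >= self.min_confidence:
            return answer
        # Escalate only when the cheap answer looks unreliable.
        self.premium_calls += 1
        answer, _ = self.premium(prompt)
        return answer


def cheap_model(prompt: str) -> Tuple[str, float]:
    # Stub: pretend short prompts are easy and long ones are hard.
    confidence = 0.9 if len(prompt) < 40 else 0.4
    return f"cheap:{prompt}", confidence


def premium_model(prompt: str) -> Tuple[str, float]:
    return f"premium:{prompt}", 0.99


router = EscalatingRouter(cheap=cheap_model, premium=premium_model)
easy = router.run("Summarize this ticket")
hard = router.run("Reconcile these three conflicting invoices against the contract terms")
```

Note that an escalated request pays for both calls, so the threshold is itself a cost lever worth measuring.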

Inside real teams, this looks pragmatic. Craft Docs’ fast AI makeover shows how a focused, agentic layer can raise product velocity. Meanwhile, buyers are waking up to costs beyond API fees: process change, data plumbing, MLOps, and compliance.

“This AI agent will automate 80% of our manual invoicing tasks, reducing operational costs by $2M annually.”

Here’s the part most people miss. The dollar savings show up when you redesign the workflow, not when you swap one model for another.

The Why Behind the Move

Teams aren’t chasing novelty. They’re buying control.

“Every CEO has the same mandate right now: ship AI features faster. Improve efficiency. Cut costs. Use Claude, use GPT, use whatever works.”

• Model

- Treat models as commodities behind an orchestrator. Route by task, latency, and price.

- Cache prompts and responses to minimize re-compute.
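A minimal sketch of a prompt/response cache, assuming exact-match lookups after whitespace normalization and a fixed time-to-live to limit staleness. `ResponseCache` and its parameters are hypothetical; production systems often add semantic matching, eviction, and shared storage.

```python
import hashlib
import time
from typing import Callable, Dict, Tuple


class ResponseCache:
    def __init__(self, ttl_seconds: float = 300.0,
                 clock: Callable[[], float] = time.monotonic):
        self.ttl_seconds = ttl_seconds
        self.clock = clock  # injectable so expiry can be tested
        self._store: Dict[str, Tuple[float, str]] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        # Collapse whitespace so trivially different prompts share an entry.
        normalized = " ".join(prompt.split())
        return hashlib.sha256(f"{model}\n{normalized}".encode("utf-8")).hexdigest()

    def get_or_call(self, model: str, prompt: str, call: Callable[[str], str]) -> str:
        key = self._key(model, prompt)
        entry = self._store.get(key)
        now = self.clock()
        if entry is not None and now - entry[0] < self.ttl_seconds:
            self.hits += 1
            return entry[1]
        # Miss or expired entry: pay for the call and store the result.
        self.misses += 1
        response = call(prompt)
        self._store[key] = (now, response)
        return response

    @property
    def hit_rate(self) -> float:
        total = self.hits + self.misses
        return self.hits / total if total else 0.0


calls = []

def fake_model(prompt: str) -> str:
    # Stand-in for a paid model call.
    calls.append(prompt)
    return f"answer to: {prompt}"


cache = ResponseCache(ttl_seconds=60.0)
first = cache.get_or_call("small-model", "What is our refund policy?", fake_model)
second = cache.get_or_call("small-model", "What is  our refund policy?", fake_model)
```

The second request differs only by an extra space, so it is served from the cache and the model is called once.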

• Traction

- Orchestrated, cached pipelines show step-function latency and cost gains.

- Agent performance improves with centralized control and eval loops (~80% gains reported in research summaries).

• Valuation / Funding

- Aggregators and control-plane vendors accrue durable value if they own routing, policy, and observability.

- Value concentrates where spend is governed, not where tokens originate.

• Distribution

- “One API” is easy to trial and integrate. Procurement favors vendor-agnostic control planes.

- Cost dashboards and per-call SLAs become the new demo.

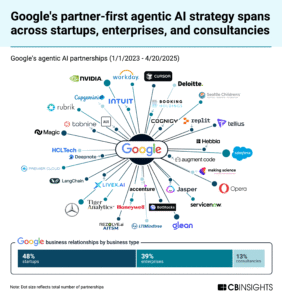

• Partnerships & Ecosystem Fit

- Works best when paired with security stacks for policy, audit, and data controls.

- Tight integrations with hyperscalers, vector DBs, and feature stores amplify impact.

• Timing

- 2026 budgets put AI line items under scrutiny. Control beats experimentation at scale.

- Custom silicon and managed agents from clouds make orchestration even more valuable.

• Competitive Dynamics

- Hyperscalers bundle end-to-end stacks; independents win on neutrality and speed.

- The moat isn’t the model—it’s distribution, policy, and total cost control.

• Strategic Risks

- Cache staleness or misrouting can degrade quality.

- Security, PII handling, and audit gaps invite regulatory risk.

- Over-optimizing for cost can quietly erode accuracy and user trust.

What Builders Should Notice

- Cost control is an architecture, not a procurement trick.

- Centralize decisions: one API, one router, one policy layer.

- Cache first. The cheapest token is the one you never spend.

- Route 70–90% of tasks to cheap models; escalate on evidence, not vibes.

- Measure unit economics by workflow, not by model.
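Per-call tracking rolled up by workflow might look like the sketch below. The model names, the prices, and the `SpendLedger` class are hypothetical, and it assumes one blended price per 1,000 tokens, whereas real providers usually price input and output tokens separately.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical prices in USD per 1,000 tokens.
PRICE_PER_1K = {"small-model": 0.0005, "large-model": 0.01}


@dataclass
class CallRecord:
    workflow: str
    model: str
    input_tokens: int
    output_tokens: int

    @property
    def cost(self) -> float:
        tokens = self.input_tokens + self.output_tokens
        return tokens / 1000 * PRICE_PER_1K[self.model]


class SpendLedger:
    def __init__(self) -> None:
        self.records: List[CallRecord] = []

    def log(self, record: CallRecord) -> None:
        self.records.append(record)

    def cost_by_workflow(self) -> Dict[str, float]:
        # Sum spend per workflow, regardless of which model served each call.
        totals: Dict[str, float] = defaultdict(float)
        for record in self.records:
            totals[record.workflow] += record.cost
        return dict(totals)


ledger = SpendLedger()
ledger.log(CallRecord("invoice-triage", "small-model", 800, 200))
ledger.log(CallRecord("invoice-triage", "large-model", 1500, 500))
ledger.log(CallRecord("support-reply", "small-model", 400, 100))
totals = ledger.cost_by_workflow()
```

Grouping by workflow rather than by model shows what a single business outcome costs, which is the number a redesign should move.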

Buildloop reflection

“AI rewards speed—only when paired with spend discipline.”

Sources

Commercial Appeal — The 2026 AI Cost Crisis: The Rise of One API Aggregation Platforms and Their Potential to Deliver 80% Savings

LinkedIn — Cutting AI costs with per-call tracking and smarter …

Medium — Introducing AIOStack: AI Security That Moves at the Speed …

Elevate (Substack) — The 80% Problem in Agentic Coding – by Addy Osmani

Decoding Discontinuity — The 80% Rule: Who Captures Value When AI Does the Work

Facebook Groups — Controlling ai agent token costs is crucial for business

Bain & Company — The New AI Stack: Speed, Scale, and Real-World ROI

LinkedIn — AI Cost Trap: Beyond API Fees | Jeff Groves

The Pragmatic Engineer — Inside a five-year-old startup’s rapid AI makeover

Symphonize — Costs of Building AI Agents: What Decision Makers Need to Know