What Changed and Why It Matters

OpenAI launched GPT‑5.3‑Codex‑Spark, a coding model that runs on Cerebras chips. It’s the company’s first production model to operate on non‑Nvidia silicon.

The change is about latency and supply. Multiple reports cite “near‑instant” responses and up to 15x faster code generation. That kind of speed shifts developer behavior and lowers context‑switch costs. It also signals a multi‑silicon future for AI workloads.

Zoom out and the pattern becomes obvious: inference is fragmenting across specialized accelerators, and workloads with tight latency budgets will move first.


The Actual Move

Here’s what OpenAI did, according to the coverage:

- Launched GPT‑5.3‑Codex‑Spark, a fast, coding‑focused model designed for ultra‑low latency.

- Deployed it on Cerebras Systems’ wafer‑scale accelerators, marking OpenAI’s first model to run on Cerebras hardware rather than Nvidia GPUs.

- Framed the win as speed: coverage highlights “near‑instant” interactions and claims of up to 15x faster code generation.

- Positioned the model to handle more than simple completions, with coverage noting broader coding tasks and faster iteration loops.

- Built on an earlier reported multibillion‑dollar OpenAI–Cerebras deal (AI Business puts it at $10B), signaling longer‑term diversification of compute supply.

Here’s the part most people miss: this isn’t just a chip story. It’s a UX story. Latency is product.

The Why Behind the Move

• Model

A specialized Codex‑class model optimized for fast inference. Smaller, tighter loops. Tuned for responsiveness over raw frontier breadth.

• Traction

Coding is a high‑frequency, latency‑sensitive workflow. “Instant” feels 10x better than “fast.” That drives daily active use, stickiness, and willingness to pay.

• Valuation / Funding

Earlier reports point to a multibillion‑dollar OpenAI–Cerebras commitment, which reduces single‑vendor risk and secures capacity. Cheaper or more dependable low‑latency inference can improve unit economics at scale.

• Distribution

If this model sits behind popular coding assistants and APIs, speed becomes a default expectation. Distribution turns speed into a moat.

• Partnerships & Ecosystem Fit

Cerebras gains marquee validation. OpenAI gains an alternative supply chain and leverage in future negotiations. The ecosystem gains proof that top‑tier models can run well off‑GPU.

• Timing

Inference demand is exploding. Builders need predictable latency. Launching a low‑latency coding model now converts capacity bets into immediate user impact.

• Competitive Dynamics

Nvidia still dominates, but the door is open for task‑specific silicon. Expect more “workload‑first” pairings: code, retrieval, and streaming agents on accelerators tuned for their patterns.

• Strategic Risks

- Real‑world performance must live up to the launch‑day speed claims.

- Software tooling and ops maturity on non‑GPU stacks can lag.

- Portability tax: maintaining parity across heterogeneous hardware adds complexity.

- If speed is the headline, quality regressions will be unforgiving.

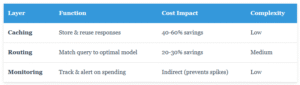

What Builders Should Notice

- Latency is leverage. Faster loops beat bigger models in daily workflows.

- Specialize the stack to the job. Hardware–model co‑design is back.

- Diversify compute early. Vendor optionality compounds pricing and resilience.

- Ship where it hurts most. Fix the bottleneck users feel every minute.

- Moats move up‑stack. Distribution turns performance into habit.

Buildloop reflection

Every market shift begins with a quiet product decision: make it instant.

Sources

- Bloomberg — OpenAI Debuts First Model Using Chips From Nvidia Rival …

- The Register — OpenAI unveils first model running on Cerebras silicon

- VentureBeat — OpenAI deploys Cerebras chips for ‘near-instant’ code …

- SiliconANGLE — OpenAI’s rapid GPT-5.3-Codex model moves beyond …

- Reddit — OpenAI deploys Cerebras chips for 15x faster code …

- TechBuzz.ai — OpenAI Partners with Cerebras on New Codex-Spark Chip

- AASTOCKS — OpenAI Releases 1st AI Model Using Chips from …

- X (formerly Twitter) — BREAKING: OpenAI just launched its first AI model running …

- AI Business — Cerebras Poses an Alternative to Nvidia With $10B OpenAI …