What Changed and Why It Matters

AI agents are moving from demos to production. The failures aren’t in the models. They’re in the data layer: quality, access, governance, and security.

Security teams are flagging new attack surfaces. Browser agents can expose credentials. IDE extensions can leak code and secrets. Meanwhile, builders keep discovering the same root cause of hallucinations: weak, ungoverned data and brittle retrieval.

Here’s the part most people miss. Agents amplify whatever data and permissions you give them. Good data compounds accuracy. Bad data compounds risk.

Agents don’t fix bad data; they amplify it.

The Actual Move

Ecosystem voices are converging on the same point: the data foundation is the constraint.

- Data platform teams argue the weakest link in GenAI is quality at scale. They push for AI-ready data layers: cataloging, lineage, masking, and continuous evaluation.

- Agent platform builders highlight accuracy as the failure mode in agentic architectures. Understanding instructions isn’t enough without grounded, relevant context.

- Security researchers warn that browser-based agents can leak credentials and sensitive data. IDE extensions are becoming a major supply-chain risk for coding agents.

- Practitioners caution that most AI POCs fail due to missing data foundations. Agents won’t replace data lakes or warehouses; they depend on them.

- Risk teams point out that prioritization is still the weak link in vulnerability management. Dashboards grow; remediation lags. The same pattern appears in AI: lots of signals, not enough action.

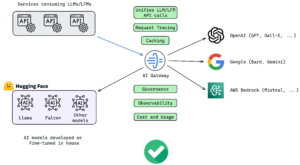

The market is reacting in three ways:

1) Building AI data platforms: unified metadata, policy enforcement, retrieval observability, and test harnesses for RAG and agents.

2) Hardening agent surfaces: sandboxed browsing, least-privilege tool use, secret isolation, and adversarial evaluation.

3) Putting ops around accuracy: continuous evals, passage attribution, guardrails, and abstention policies built into the loop.
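
To make the third reaction concrete, here is a minimal sketch of an abstention policy with passage attribution. It assumes the retriever returns a similarity score between 0 and 1 per passage. The `Passage` type, the `answer_or_abstain` function, and both thresholds are hypothetical names and values chosen for illustration, not any vendor's API.

```python
from dataclasses import dataclass


@dataclass
class Passage:
    source_id: str  # hypothetical identifier of the source document
    text: str
    score: float    # retrieval similarity; a 0..1 scale is assumed here


def answer_or_abstain(passages, min_score=0.75, min_supporting=2):
    """Decide whether there is enough grounded evidence to answer.

    Thresholds are illustrative assumptions; tune them against your own evals.
    """
    # Keep only passages the retriever is reasonably confident about.
    supporting = [p for p in passages if p.score >= min_score]
    if len(supporting) < min_supporting:
        # Too little evidence: abstain instead of letting the model guess.
        return {"action": "abstain", "citations": []}
    # Attribute the answer to the distinct sources that support it.
    citations = sorted({p.source_id for p in supporting})
    return {"action": "answer", "citations": citations}


if __name__ == "__main__":
    retrieved = [
        Passage("doc-1", "refund policy text", 0.91),
        Passage("doc-2", "refund exceptions text", 0.80),
        Passage("doc-3", "unrelated text", 0.40),
    ]
    print(answer_or_abstain(retrieved))
```

The design choice is that the decision to answer depends on retrieval evidence, not on how confident the generated text sounds. The returned citations can be shown next to the answer so a reviewer can verify it.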

The data layer is the product.

The Why Behind the Move

• Model

Model quality is good enough for many tasks. Accuracy now hinges on retrieval, data quality, and policy.

• Traction

Teams see ROI when they fix pipelines, not just prompts. Clean schemas and grounded context beat model swaps.

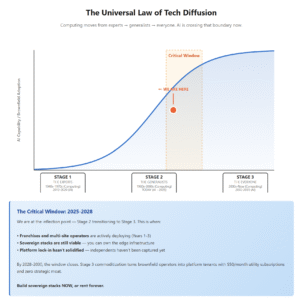

• Valuation / Funding

Capital is flowing to infra that reduces AI risk and improves reliability. Platforms that make agents safer and more accurate win buyer trust.

• Distribution

Winners integrate where work already happens: data clouds, IDEs, browsers, and ticketing systems. Frictionless connectors beat greenfield tools.

• Partnerships & Ecosystem Fit

Expect deep hooks into identity providers, data catalogs, vector databases, SIEM/EDR, and cloud policy engines. “Bring your stack” is becoming table stakes.

• Timing

As agents gain tools and autonomy, the cost of a mistake rises. Security incidents and compliance pressure are pulling the future forward.

• Competitive Dynamics

Differentiation is shifting from model access to trust: governance, observability, and provable accuracy. The moat isn’t the model; it’s the ability to deliver safe, reliable outcomes wherever the work happens.

• Strategic Risks

- Platform bloat without measurable accuracy gains

- Over-permissioned tools and credential sprawl

- Privacy and regulatory exposure from ungoverned retrieval

- Vendor lock-in to a single model or vector store

Build for verification, not vibes. Attribution, evals, and policies are your safety net.
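
One way to counter the over-permissioned tools risk is a deny-by-default gate between the agent and its tools. The sketch below assumes tools are plain Python callables held in a dictionary. `ToolGate`, `ToolNotPermitted`, and the tool names are hypothetical and only illustrate the pattern.

```python
from dataclasses import dataclass, field


class ToolNotPermitted(Exception):
    """Raised when an agent requests a tool outside its allowlist."""


@dataclass
class ToolGate:
    allowed: frozenset                              # tool names this agent may call
    audit_log: list = field(default_factory=list)   # (tool_name, permitted) pairs

    def call(self, tool_name, tools, **kwargs):
        permitted = tool_name in self.allowed
        # Record every attempt, including denied ones, so it can be reviewed.
        self.audit_log.append((tool_name, permitted))
        if not permitted:
            raise ToolNotPermitted(tool_name)
        return tools[tool_name](**kwargs)


if __name__ == "__main__":
    # Hypothetical tool registry for illustration only.
    tools = {
        "search_docs": lambda query: f"results for {query}",
        "delete_record": lambda record_id: f"deleted {record_id}",
    }
    gate = ToolGate(allowed=frozenset({"search_docs"}))
    print(gate.call("search_docs", tools, query="refund policy"))
    try:
        gate.call("delete_record", tools, record_id=42)
    except ToolNotPermitted as exc:
        print(f"blocked: {exc}")
```

The allowlist lives outside the prompt, so a prompt injection cannot widen it, and the audit log gives you something to verify after the fact.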

What Builders Should Notice

- Treat your data layer like a product: schemas, lineage, SLAs, and owners.

- Ground agents with verified context. Measure retrieval quality, not just response quality.

- Sandbox tool use by default. Enforce least privilege. Keep long-lived secrets out of agents.

- Ship an eval loop. Red-team with adversarial prompts and track regressions the way you track failing tests.

- Prioritize fixes, not findings. Tie accuracy and security signals to workflows.
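
As a rough illustration of measuring retrieval quality and tracking regressions like tests, the sketch below computes recall@k over a small labeled set and compares it to a stored baseline. The eval-case format, the function names, and the tolerance value are assumptions made for this example.

```python
def recall_at_k(retrieved_ids, relevant_ids, k):
    """Fraction of the relevant documents found in the top-k retrieved results."""
    relevant = set(relevant_ids)
    if not relevant:
        raise ValueError("relevant_ids must not be empty")
    top_k = set(retrieved_ids[:k])
    return len(top_k & relevant) / len(relevant)


def mean_recall_at_k(eval_cases, k):
    """Average recall@k across labeled cases (assumed format: dicts with
    'retrieved' and 'relevant' lists of document ids)."""
    scores = [recall_at_k(c["retrieved"], c["relevant"], k) for c in eval_cases]
    return sum(scores) / len(scores)


def passes_regression_check(current, baseline, tolerance=0.02):
    """True if the current score has not dropped more than `tolerance`
    below the baseline. The tolerance is an illustrative assumption."""
    return current >= baseline - tolerance


if __name__ == "__main__":
    cases = [
        {"retrieved": ["a", "b", "c"], "relevant": ["a", "d"]},
        {"retrieved": ["x", "y", "z"], "relevant": ["y"]},
    ]
    score = mean_recall_at_k(cases, k=2)
    print(score)  # 0.75
    print(passes_regression_check(score, baseline=0.80))  # False
```

Run in CI, a failed check blocks a retriever or index change the same way a failing unit test blocks a code change.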

Buildloop reflection

Accuracy is an ops problem disguised as a model problem.

Sources

- Accelario — How AI Data Platforms Solve Gen AI’s Weakest Link

- AppOrchid — The Weak Link in Agentic Architectures: Accuracy

- Knostic AI — IDE Extensions: The Weakest Link in AI Coding Security

- Security Boulevard — Browser AI Agents: The New “Weakest Link” that Can Feed Your Credentials and Data to Attackers

- LinkedIn — Fix Website Infrastructure Before AI Investments

- Your Everyday AI — From Automation to Agents: Why Weak Data Makes AI Guess

- Seemplicity — Why Prioritization Is Still the Weak Link in Vulnerability Management

- Medium — Can AI agents replace the data lakes?

- arXiv — Exposing Weak Links in Multi-Agent Systems under Adversarial Prompts